Introduction

FuriosaAI’s Tensor Contraction Processor (TCP) is a massively parallel AI accelerator targeting inference workloads. High-level frameworks such as PyTorch and XLA abstract away memory layouts and hardware scheduling, but give programmers no control over either. Low-level kernel APIs give fine-grained control, but require reasoning in bytes and hardware addresses rather than tensors. TCP’s Virtual Instruction Set Architecture (Virtual ISA) bridges this gap: it lets programmers think in terms of tensors while directly managing memory allocation and tensor unit scheduling. This manual explains TCP programming through the Virtual ISA.

The manual walks through concrete examples, targeting two audiences: programmers writing Virtual ISA directly and compiler developers generating it. Basic Rust familiarity is assumed; see the language manual if needed.

Warning

Alpha Test Build: Experimental Software

This software is an early, experimental, and incomplete build intended strictly for technical evaluation and internal testing.

Before using this software for production work, critical tasks, or important data, you must consult with Furiosa engineers.

Your feedback is vital to our development. Please provide it.

Installation

Install two dependencies:

- Rust: Follow the official guide.

- Furiosa SDK: Follow the SDK documentation.

Your First Program

Create a new project:

cargo new --bin tcp-my-project

cd tcp-my-project

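# main.rs below uses rand (SmallRng) and tokio (#[tokio::main]), so add both with the features those items need

cargo add furiosa-visa-std

cargo add rand --features small_rng

cargo add tokio --features macros,rt-multi-thread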

Add rust-toolchain.toml:

[toolchain]

channel = "nightly-2025-12-12"

components = ["rustfmt", "clippy"]

Write src/main.rs:

#![feature(register_tool)]

#![register_tool(tcp)]

extern crate furiosa_visa_std;

extern crate tokio;

extern crate rand;

use rand::SeedableRng;

use rand::rngs::SmallRng;

use furiosa_visa_std::prelude::*; // provided by the Furiosa SDK

// Declare axis sizes

axes![A = 8, B = 512];

/// The main function running in host

#[tokio::main]

async fn main() {

// Acquire exclusive access to the TCP device

let mut ctx = Context::acquire();

// TCP has three memory levels:

// - Host: system memory

// - HBM (High-Bandwidth Memory): device's main memory

// - SRAM (on-chip scratchpad): the primary SRAM tier is called DM (Data Memory)

//

// Data flows: Host → HBM → DM → compute → DM → HBM → Host.

//

// Two DMA engines move data between these levels:

// - `ctx.pdma` (PCIe DMA): transfers between Host and HBM

// - `ctx.tdma` (Tensor DMA): transfers between HBM and DM

// Create tensor on host

// Tensors are parameterized by element type and mapping

// The mapping `m![A, B]` specifies `A` as the major axis and `B` as the minor axis

let mut rng = SmallRng::seed_from_u64(42);

let host: HostTensor<i8, m![A, B]> = HostTensor::rand(&mut rng);

// Transfer to device HBM using PCIe DMA engine

// HBM tensor has two dimensions: m![A] for chip and m![B] for intra-chip address

let hbm: HbmTensor<i8, m![A], m![B]> = host.to_hbm(&mut ctx.pdma, 0x1000).await;

// Launch kernel on device

// Host continues while kernel runs asynchronously, but the kernel synchronously occupies the device

launch(kernel, (&mut ctx, &hbm))

// Host waits for the asynchronous execution of the kernel to finish

.await;

}

#[device(chip = 1)] // Running on a single chip

fn kernel(ctx: &mut Context, hbm: &HbmTensor<i8, m![A], m![B]>) {

// Move to DM (Data Memory) in on-chip SRAM using Tensor DMA engine

let dm = hbm.to_dm::<m![1], m![A], m![B]>(&mut ctx.tdma, 0);

// ... perform computations ...

}

Build and Test

TCP supports two execution environments, ordered from fastest iteration to production use:

# 1. CPUs (standalone Rust)

cargo build # Add --release for optimized builds, same below

cargo test

# 2. Real TCP devices

cargo furiosa-opt build

cargo furiosa-opt test

Development Tools

The TCP Software Toolchain (cargo furiosa-opt) provides utilities for developing, testing, and optimizing Virtual ISA programs on Furiosa chips.

It complements the Furiosa SDK’s compiler by giving developers fine-grained control over program behavior, whether the programmer writes Virtual ISA by hand or a compiler generates it.

The toolchain consists of four components:

- Compiler: Translates Virtual ISA into executable code for the chip.

- Interpreter: Executes Virtual ISA as native Rust programs for software simulation and debugging.

- Language Server: Enables IDE features (autocompletion, diagnostics, navigation) via Rust’s language server infrastructure.

- Schedule Viewer: Visualizes the execution timeline to help identify performance bottlenecks.

Book Organization

The rest of this book is organized into the following chapters:

- Hello, TCP!: How TCP programming works, introduced through worked examples covering element-wise operations and tensor contractions.

- Mapping Tensors: How logical tensors map to physical memory: axis layout, stride, padding, and tiling.

- Moving Tensors: How data moves between memory tiers (HBM, DM) and the Tensor Unit via Fetch, Commit, and DMA engines.

- Computing Tensors: How the Tensor Unit pipeline (Switching, Collect, Contraction, Vector, Cast, Transpose) transforms data each cycle.

- Scheduling: How to control the order and concurrency of operations across contexts.

- Kernel Examples: End-to-end examples showing how mapping, movement, computation, and scheduling combine into real kernels.

License

This documentation and the entire furiosa-opt repository are licensed under the Apache License Version 2.0.

Hello, TCP!

This chapter introduces TCP programming through worked examples. Each example builds a mental model of how computation maps to hardware, making the rest of this book easier to follow. The first two examples cover element-wise operations; the remaining three cover tensor contractions (dot product, GEMV, and GEMM), each adding one new hardware concept. Two additional examples (Blocked GEMM and Flash Attention) are outlined as stubs.

Mathematical Background

This section defines the two mathematical concepts that TCP is built to accelerate: tensors and their contractions.

Tensor

A tensor is a mapping from each tensor index to its corresponding value.

To make this precise, we first define a tensor’s shape.

Unlike libraries where axis order encodes meaning (e.g., NumPy’s ndarray), we define a tensor’s shape as an unordered set of named axes.

The shapes \(\{\texttt{N} = 4, \texttt{C} = 3\}\) and \(\{\texttt{C} = 3, \texttt{N} = 4\}\) denote the same shape; axis names carry the meaning, not the position.

A tensor index is formed by specifying an index value for each axis. For a tensor with shape \(\{\texttt{N} = 4, \texttt{C} = 3\}\), the valid indices are: \(\{\texttt{N}: 0, \texttt{C}: 0\}\), \(\{\texttt{N}: 0, \texttt{C}: 1\}\), \(\{\texttt{N}: 0, \texttt{C}: 2\}\), \(\{\texttt{N}: 1, \texttt{C}: 0\}\), etc.
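The definitions above can be sketched in plain Rust. This is only an illustration: `Shape`, `Index`, and `indices` are hypothetical names that are not part of the Virtual ISA, and a `BTreeMap` stands in for the unordered set of named axes.

```rust
use std::collections::BTreeMap;

/// A shape is a set of named axes; a BTreeMap ignores the order they were written in.
type Shape = BTreeMap<&'static str, usize>;
/// A tensor index assigns one value to every axis of the shape.
type Index = BTreeMap<&'static str, usize>;

/// Enumerate every valid index of `shape`.
fn indices(shape: &Shape) -> Vec<Index> {
    let mut out = vec![Index::new()];
    for (&axis, &size) in shape {
        let mut next = Vec::with_capacity(out.len() * size);
        for partial in &out {
            for v in 0..size {
                let mut idx = partial.clone();
                idx.insert(axis, v);
                next.push(idx);
            }
        }
        out = next;
    }
    out
}

fn main() {
    let nc: Shape = [("N", 4), ("C", 3)].into_iter().collect();
    let cn: Shape = [("C", 3), ("N", 4)].into_iter().collect();
    // Both spellings describe the same shape.
    assert_eq!(nc, cn);
    // One index per combination of axis values: 4 * 3.
    println!("{} valid indices", indices(&nc).len());
}
```

A tensor is then any function from these indices to values, for example a `BTreeMap<Index, f32>`.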

A tensor can behave like a multi-dimensional array of numbers. For example:

- 0D Tensor (Scalar): a single number like \(5.2\)

- 1D Tensor (Vector): a sequence like \([1, 2, 3]\) with one axis

- 2D Tensor (Matrix): a \(2 \times 4\) grid with two axes

- 4D Tensor: a batch of RGB images with shape \(\{\texttt{N} = 4, \texttt{C} = 3, \texttt{H} = 256, \texttt{W} = 512\}\)

Tensor Contraction

A tensor contraction is an operation that takes two tensors, pairs up specific axes that appear in both inputs, and sums the products of their elements along those axes.

Einsum notation is a compact way to write contractions: each input tensor is listed by its axis labels, and output axes follow the → arrow; any axis that appears in both inputs but not in the output is summed over.

| Operation | Formula | Einsum notation |

|---|---|---|

| Dot product | \(\sum_i x_i y_i\) | \(I, I \rightarrow 1\) |

| GEMV | \(y_i = \sum_j A_{ij} x_j\) | \(IJ, J \rightarrow I\) |

| GEMM | \(C_{ij} = \sum_k A_{ik} B_{kj}\) | \(IK, KJ \rightarrow IJ\) |

Every contraction can be decomposed into three steps: Broadcast, Multiply, and Reduce.

| Step | Dot Product (\(I, I \rightarrow 1\)) | GEMV (\(IJ, J \rightarrow I\)) | GEMM (\(IK, KJ \rightarrow IJ\)) |

|---|---|---|---|

| Broadcast | none (axes match) | \(x\) broadcasts across \(I\) | \(A\) across \(J\); \(B\) across \(I\) |

| Multiply | \(x_i \cdot y_i\) | \(A_{ij} \cdot x_j\) | \(A_{ik} \cdot B_{kj}\) |

| Reduce | \(\sum_i x_i y_i\) | \(y_i = \sum_j A_{ij} x_j\) | \(C_{ij} = \sum_k A_{ik} B_{kj}\) |
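The three steps in the table can be spelled out for GEMV in ordinary Rust. This is a host-side sketch for intuition only; it uses `f32` vectors and a hypothetical `gemv` function, and says nothing about how the hardware implements each step.

```rust
/// GEMV (`IJ, J -> I`) written as explicit Broadcast, Multiply, and Reduce steps.
fn gemv(a: &[Vec<f32>], x: &[f32]) -> Vec<f32> {
    // Broadcast: replicate x along I so it has the same axes {I, J} as A.
    let x_b: Vec<Vec<f32>> = a.iter().map(|_| x.to_vec()).collect();
    // Multiply: element-wise product over the full {I, J} index space.
    let prod: Vec<Vec<f32>> = a
        .iter()
        .zip(&x_b)
        .map(|(row, xr)| row.iter().zip(xr).map(|(p, q)| p * q).collect())
        .collect();
    // Reduce: sum over J, the axis that is absent from the output.
    prod.iter().map(|row| row.iter().sum()).collect()
}

fn main() {
    let a = vec![vec![1.0, 2.0], vec![3.0, 4.0]];
    let x = vec![10.0, 1.0];
    println!("{:?}", gemv(&a, &x));
}
```

The dot product is the same code with the Broadcast step removed, and GEMM adds a second broadcast (of \(A\) across \(J\)).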

Tensor Contraction Processor

This section covers the hardware concepts needed to understand the examples: the processing unit hierarchy, memory tiers, tensor mapping types, and execution contexts.

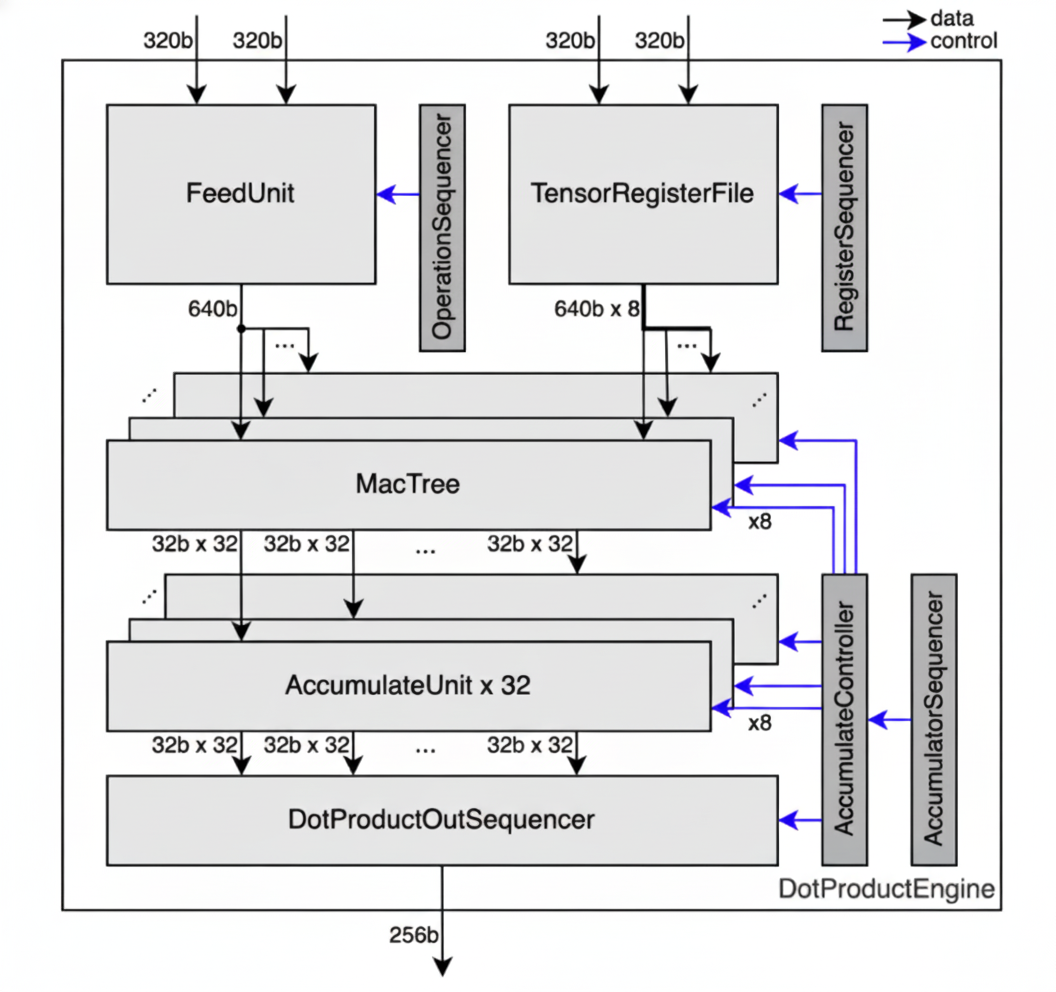

Processing Units

The TCP architecture accelerates these contractions by streaming tensor data through a hierarchy of parallel processing units.

| Level | Count (RNGD) | Role |

|---|---|---|

Chip | (system-dependent) | Top-level unit; holds HBM |

Cluster | 2 per chip | Groups 256 slices |

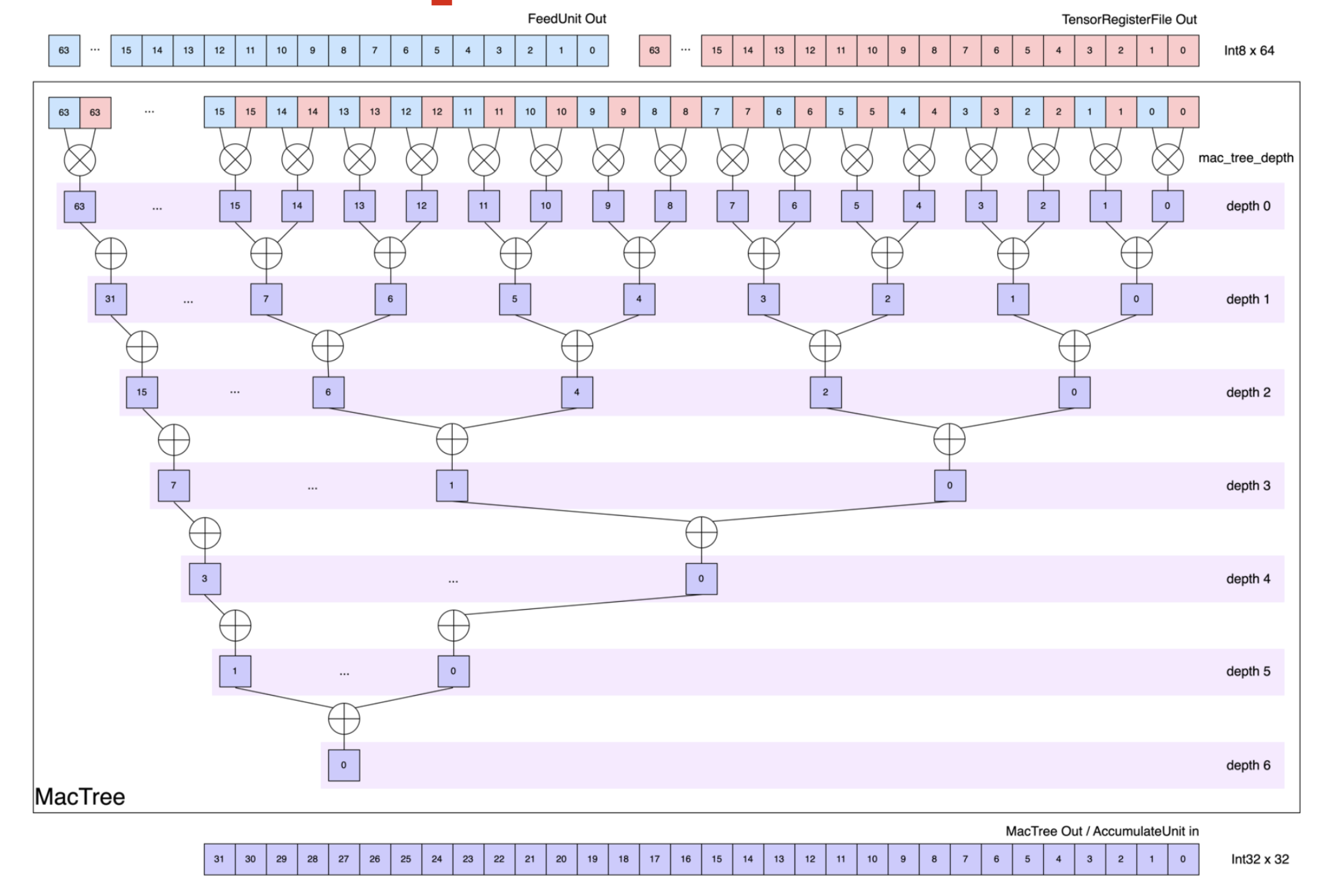

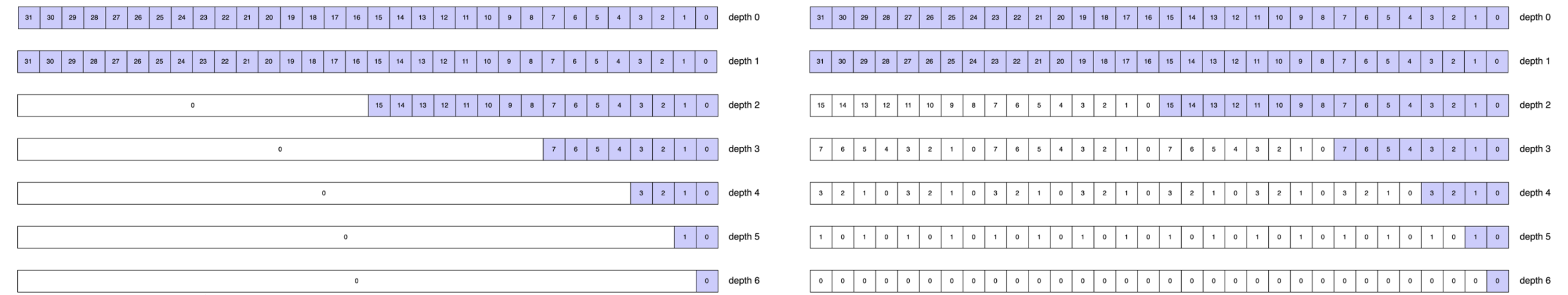

Slice | 256 per cluster | Runs one Tensor Unit: a Fetch → Switching → Collect → Contraction → Vector → Cast → Transpose → Commit pipeline |

Row | 8 per slice | One row of the Contraction Engine’s MAC (multiply-accumulate) array |

The Switch Engine connects slices, enabling data redistribution across the slice array.

Memory

| Type | Location | Capacity (RNGD) | Role |
|---|---|---|---|
| HbmTensor | On-package | 48 GB, 1.5 TB/s | Long-term weight and activation storage |
| DmTensor | On-chip SRAM | 256 MB total; 512 KB/slice | Primary working memory for computations |
| TrfTensor | On-chip SRAM | 8 KB × 8 MAC rows / slice | Weight register file for Contraction Engine |
| VrfTensor | On-chip SRAM | 8 KB / slice | Operand register file for Vector Engine |

Most alignment and capacity constraints in this book derive from the counts and capacities in these tables.
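As a quick consistency check, the DM figures follow from the unit counts: 2 clusters × 256 slices × 512 KB per slice gives the 256 MB total. The sketch below assumes 1 KB = 1024 bytes; the constant names and the `fits_in_dm_slice` helper are hypothetical and only illustrate the arithmetic.

```rust
const KIB: u64 = 1024;
const MIB: u64 = 1024 * KIB;

const CLUSTERS_PER_CHIP: u64 = 2;
const SLICES_PER_CLUSTER: u64 = 256;
const DM_BYTES_PER_SLICE: u64 = 512 * KIB;

/// Total DM capacity of one chip, derived from the per-slice capacity.
fn dm_bytes_per_chip() -> u64 {
    CLUSTERS_PER_CHIP * SLICES_PER_CLUSTER * DM_BYTES_PER_SLICE
}

/// Does a per-slice tile of `elements` values, `elem_bytes` each, fit in one slice's DM?
fn fits_in_dm_slice(elements: u64, elem_bytes: u64) -> bool {
    elements * elem_bytes <= DM_BYTES_PER_SLICE
}

fn main() {
    println!("DM per chip: {} MiB", dm_bytes_per_chip() / MIB);
    // 2048 bf16 values (2 bytes each) occupy 4 KiB of a slice.
    println!("2048 x bf16 fits: {}", fits_in_dm_slice(2048, 2));
}
```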

Tensor Mapping

TCP’s Virtual ISA exposes the hardware hierarchy through its type system.

Each tensor type encodes the element type and how each logical axis distributes across the hardware hierarchy.

For example, DmTensor<bf16, m![1], m![1 # 2], m![A / 8 # 256], m![A % 8]> (with axes![A = 2048]) represents a bf16 tensor on one chip, one of two clusters, distributed across 256 slices with 8 elements per slice.

TCP also introduces two kernel-specific parameters: Time indexes pipeline iterations; Packet indexes elements within each iteration.

The mapping expression (m![] macro and its operators) is used to express this distribution:

- / splits by stride: A / 8 gives 2048 / 8 = 256 indices, the “which slice” index.

- % gives the inner count: A % 8 gives one index for each of the 8 elements a slice holds.

- # pads to the hardware unit count: # 256 makes the slice count explicit.

Together, each element of A is mapped to a well-defined position within exactly one slice.
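The index arithmetic behind this mapping can be checked with a few lines of plain Rust. The `place` function and the constants are hypothetical names used only for illustration; they model `m![A / 8 # 256]` as the slice index and `m![A % 8]` as the position inside the slice.

```rust
use std::collections::HashSet;

const A: usize = 2048;
const PER_SLICE: usize = 8;
const SLICES: usize = 256;

/// (slice, offset within slice) for logical element `a`.
fn place(a: usize) -> (usize, usize) {
    assert!(a < A);
    (a / PER_SLICE, a % PER_SLICE)
}

fn main() {
    let positions: HashSet<(usize, usize)> = (0..A).map(place).collect();
    // Every element lands in a distinct position, and no slice index exceeds the padded count.
    assert_eq!(positions.len(), A);
    assert!(positions.iter().all(|&(slice, _)| slice < SLICES));
    println!("element 9 -> {:?}", place(9));
}
```

Here A / 8 already yields exactly 256 indices, so the `# 256` padding adds no unused slices; with a smaller A it would.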

Execution Contexts

Every device kernel has two execution contexts running concurrently on separate hardware resources: ctx.main and ctx.sub.

main runs the primary computation; sub runs a concurrent pipeline, typically used to prefetch operands into TRF or VRF while main computes.

If main needs operands that sub is still fetching, main automatically waits for sub’s execution to ensure synchronization.

Because both contexts share the flat on-chip SRAM, the programmer must explicitly assign DM addresses (e.g. the addr argument in .to_dm(), .commit()) to prevent tensors from overlapping.

Addresses must not collide, but they can be non-contiguous.
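A simple way to reason about this rule is to list each tensor's per-slice region and check the regions pairwise. The sketch below assumes DM addresses are byte offsets within a slice, which this manual does not state explicitly; `Region`, `overlaps`, and `all_disjoint` are hypothetical helpers. The numbers use the base addresses 0, 1 << 12, and 1 << 13 that appear in the examples later in this chapter, with 8 × i32 = 32 bytes per slice.

```rust
/// A per-slice DM allocation covering [addr, addr + len). Byte addressing is an assumption.
#[derive(Clone, Copy)]
struct Region {
    addr: u64,
    len: u64,
}

/// Two half-open ranges overlap when each starts before the other ends.
fn overlaps(a: Region, b: Region) -> bool {
    a.addr < b.addr + b.len && b.addr < a.addr + a.len
}

/// True when no two regions collide; gaps between regions are allowed.
fn all_disjoint(regions: &[Region]) -> bool {
    for i in 0..regions.len() {
        for j in (i + 1)..regions.len() {
            if overlaps(regions[i], regions[j]) {
                return false;
            }
        }
    }
    true
}

fn main() {
    let lhs = Region { addr: 0, len: 32 };
    let rhs = Region { addr: 1 << 12, len: 32 };
    let out = Region { addr: 1 << 13, len: 32 };
    println!("disjoint: {}", all_disjoint(&[lhs, rhs, out]));
}
```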

Examples

The first two examples cover element-wise operations by using the Vector Engine; the remaining three cover tensor contractions by using the Contraction Engine.

Constant Addition

The first kernel takes a vector of integers and adds the constant 1 to each element.

It uses one chip, one of two clusters, and all 256 slices in that cluster, with one 8-element group per slice.

The Vector Engine processes integers using fixed-point operations, so we use vector_fxp(FxpBinaryOp::AddFxp, 1) to add the constant value.

flowchart TB

HOST[Host] <-->|PCIe DMA| HBM[(HBM)]

HBM <-->|Tensor DMA| DM[(DM)]

subgraph TU[Tensor Unit]

direction TB

FE[Fetch] --> SW["Switch (Forward)"] --> CO[Collect] --> VE["Vector (AddFxp +1)"] --> CM[Commit]

end

DM -->|stream| FE

CM -->|stream| DM

This example demonstrates the full Tensor Unit pipeline.

to_dm moves data from HBM to DM, splitting the flat tensor across 256 slices.

The begin → fetch → collect → vector_init → vector_intra_slice_branch → vector_fxp → vector_final → commit chain processes each slice in one pass, and vector_fxp(FxpBinaryOp::AddFxp, 1) adds the integer constant 1 to every element in parallel across all 256 slices.

BranchMode::Unconditional configures the pipeline to execute on every cycle.

#![feature(register_tool)]

#![register_tool(tcp)]

extern crate furiosa_visa_std;

extern crate tokio;

extern crate rand;

use rand::SeedableRng;

use rand::rngs::SmallRng;

use furiosa_visa_std::prelude::*;

axes![A = 2048]; // declare named axis A with size 2048; used in all tensor types below

type Chip = m![1];

type Cluster = m![1 # 2]; // 1 active cluster; hardware has 2 per chip

type Slice = m![A / 8 # 256]; // distribute A across 256 slices, 8 elements each

#[tokio::main]

async fn main() {

let mut ctx = Context::acquire();

// Create input on the host and transfer to HBM

let mut rng = SmallRng::seed_from_u64(42);

let input = HostTensor::<i32, m![A]>::rand(&mut rng);

let in_hbm = input.to_hbm(&mut ctx.pdma, 0).await;

// Launch the device kernel

let out_hbm = launch(kernel, (&mut ctx, &in_hbm)).await;

// Transfer result back to host

let _out = out_hbm.to_host::<m![A]>(&mut ctx.pdma).await;

}

#[device(chip = 1)]

fn kernel(ctx: &mut Context, input: &HbmTensor<i32, Chip, m![A]>) -> HbmTensor<i32, Chip, m![A]> {

// HBM → DM: split 2048 elements across 256 slices (8 elements per slice)

let dm = input.to_dm::<Cluster, Slice, m![A % 8]>(&mut ctx.tdma, 0);

let result = ctx

.main

.begin(dm.view())

// Fetch: stream 8-element packets from DM into the pipeline

.fetch::<i32, m![1], m![A % 8]>()

// Collect: normalize the stream into 32-byte flits (8 × i32)

.collect::<m![1], m![A % 8]>()

// Vector Engine: enter pipeline and arm unconditionally

.vector_init()

.vector_intra_slice_branch(BranchMode::Unconditional)

// Add the scalar constant 1 to every element

.vector_fxp(FxpBinaryOp::AddFxp, 1)

// Exit VE and commit: write results back to DM

.vector_final()

.commit::<m![A % 8]>(1 << 12);

// DM → HBM

result.to_hbm(&mut ctx.tdma, 1 << 28)

}

Elementwise Multiplication

The second kernel multiplies two same-shape vectors element-wise.

Because the Vector Engine’s fixed-point multiply (FxpBinaryOp::MulInt in the code below) takes a second operand per element, that operand must come from the VRF (Vector Register File).

The VRF is a small per-slice register file that the Vector Engine reads every cycle; it is loaded in the sub context while the main computation streams.

flowchart TB

LHS_HBM[(lhs: HBM)] -->|Tensor DMA| LHS_DM[(lhs: DM)]

RHS_HBM[(rhs: HBM)] -->|Tensor DMA| RHS_DM[(rhs: DM)]

subgraph sub[sub context]

direction LR

sFE[Fetch] --> sSW[Switch] --> sCO[Collect]

end

subgraph main[main context]

direction LR

mFE[Fetch] --> mSW[Switch] --> mCO[Collect] --> VE["Vector (MulInt)"] --> CM[Commit]

end

RHS_DM --> sFE

LHS_DM --> mFE

sCO --> VRF[(VRF)]

VRF --> VE

CM --> OUT_DM[(result: DM)]

OUT_DM -->|Tensor DMA| OUT_HBM[(HBM)]

This example adds the VRF and the sub context.

rhs_dm is allocated at a different base address (1 << 12) to avoid overlapping with lhs_dm.

The sub context loads rhs_dm into the VRF through the Fetch → Switch → Collect → .to_vrf(0) pipeline.

The main context then streams lhs_dm and multiplies each element by its VRF counterpart using MulInt; the hardware runs both contexts concurrently where possible.

#![feature(register_tool)]

#![register_tool(tcp)]

extern crate furiosa_visa_std;

extern crate tokio;

extern crate rand;

use rand::SeedableRng;

use rand::rngs::SmallRng;

use furiosa_visa_std::prelude::*;

axes![A = 2048];

type Chip = m![1];

type Cluster = m![1 # 2];

type Slice = m![A / 8 # 256];

#[tokio::main]

async fn main() {

let mut ctx = Context::acquire();

let mut rng = SmallRng::seed_from_u64(42);

let lhs = HostTensor::<i32, m![A]>::rand(&mut rng);

let rhs = HostTensor::<i32, m![A]>::rand(&mut rng);

let lhs_hbm = lhs.to_hbm(&mut ctx.pdma, 0).await;

let rhs_hbm = rhs.to_hbm(&mut ctx.pdma, 1 << 28).await;

let out_hbm = launch(kernel, (&mut ctx, &lhs_hbm, &rhs_hbm)).await;

let _out = out_hbm.to_host::<m![A]>(&mut ctx.pdma).await;

}

#[device(chip = 1)]

fn kernel(

ctx: &mut Context,

lhs: &HbmTensor<i32, Chip, m![A]>,

rhs: &HbmTensor<i32, Chip, m![A]>,

) -> HbmTensor<i32, Chip, m![A]> {

// Move both operands from HBM to DM; use distinct base addresses to avoid overlap

let lhs_dm = lhs.to_dm::<Cluster, Slice, m![A % 8]>(&mut ctx.tdma, 0);

let rhs_dm = rhs.to_dm::<Cluster, Slice, m![A % 8]>(&mut ctx.tdma, 1 << 12);

// Sub context: load rhs into VRF (runs concurrently with the main context below).

// VRF holds a per-slice operand that the Vector Engine reads every cycle.

let rhs_vrf: VrfTensor<i32, Chip, Cluster, Slice, m![A % 8]> = ctx

.sub

.begin(rhs_dm.view())

.fetch::<i32, m![1], m![A % 8]>()

.collect::<m![A % 8 / 8], m![A % 8 % 8]>()

.to_vrf(0);

// Main context: multiply every lhs element by its rhs counterpart from VRF

let result = ctx

.main

.begin(lhs_dm.view())

.fetch::<i32, m![1], m![A % 8]>()

.collect::<m![1], m![A % 8]>()

.vector_init()

.vector_intra_slice_branch(BranchMode::Unconditional)

// Each slice multiplies its 8 lhs elements by the matching 8 rhs elements in VRF

.vector_fxp(FxpBinaryOp::MulInt, &rhs_vrf)

.vector_final()

.commit::<m![A % 8]>(1 << 13);

// DM → HBM: write to a region distinct from lhs (0) and rhs (1 << 28)

result.to_hbm(&mut ctx.tdma, 2 << 28)

}

The following three examples implement the contractions from the table above, each introducing a different Switch Engine topology.

Dot Product

The dot product \(I, I \rightarrow 1\) is the simplest contraction: there is no broadcast step, and both operands reduce along the same axis.

The sub context loads rhs into the TRF, the on-chip register file that holds one operand stationary while the other streams through, via Fetch → Collect → .to_trf().

TrfAddress::Full dedicates the entire TRF to this tensor.

.align() pairs the streaming LHS flits with the stationary RHS, doubling the packet width.

.contract() multiplies and reduce-adds along A spatially via the hardware reduction tree; .accumulate() then performs temporal accumulation across the time axis, producing a scalar per slice; .cast() converts the f32 accumulator output back to bf16.
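The split between spatial reduction and temporal accumulation can be modelled on the host. This is a rough sketch under stated assumptions: `f32` stands in for bf16 inputs, a sequential sum stands in for the hardware reduction tree, and the packet width of 32 elements is taken from the `m![A % 32]` mapping passed to `.align()` in the code below. `dot_spatial_temporal` is a hypothetical name.

```rust
const PACKET: usize = 32; // elements reduced together in one time step

fn dot_spatial_temporal(lhs: &[f32], rhs: &[f32]) -> f32 {
    assert_eq!(lhs.len(), rhs.len());
    assert_eq!(lhs.len() % PACKET, 0);
    let mut acc = 0.0f32; // temporal accumulator, carried across time steps
    for (l, r) in lhs.chunks(PACKET).zip(rhs.chunks(PACKET)) {
        // Spatial step: multiply one packet pair and reduce it to a single value.
        let partial: f32 = l.iter().zip(r).map(|(a, b)| a * b).sum();
        // Temporal step: fold that value into the running total.
        acc += partial;
    }
    acc
}

fn main() {
    let lhs = vec![1.0f32; 2048];
    let rhs = vec![2.0f32; 2048];
    // 2048 / 32 = 64 time steps, each contributing one partial sum.
    println!("{}", dot_spatial_temporal(&lhs, &rhs));
}
```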

#![feature(register_tool)]

#![register_tool(tcp)]

extern crate furiosa_visa_std;

extern crate tokio;

extern crate rand;

use rand::SeedableRng;

use rand::rngs::SmallRng;

use furiosa_visa_std::prelude::*;

axes![A = 2048];

type Chip = m![1];

type Cluster = m![1 # 2];

type Slice = m![1 # 256]; // 1 active slice; m![A / 8 # 256] would distribute across all 256

type Time = m![1]; // No temporal iteration

type Row = m![1]; // No row parallelism

#[tokio::main]

async fn main() {

let mut ctx = Context::acquire();

let mut rng = SmallRng::seed_from_u64(42);

let lhs = HostTensor::<bf16, m![A]>::rand(&mut rng);

let rhs = HostTensor::<bf16, m![A]>::rand(&mut rng);

let lhs_hbm = lhs.to_hbm(&mut ctx.pdma, 0).await;

let rhs_hbm = rhs.to_hbm(&mut ctx.pdma, 1 << 28).await;

let out_hbm = launch(kernel, (&mut ctx, &lhs_hbm, &rhs_hbm)).await;

let out = out_hbm.to_host::<m![1]>(&mut ctx.pdma).await;

}

#[device(chip = 1)]

fn kernel(

ctx: &mut Context,

lhs: &HbmTensor<bf16, Chip, m![A]>,

rhs: &HbmTensor<bf16, Chip, m![A]>,

) -> HbmTensor<bf16, Chip, m![1]> {

// HBM → DM

let lhs: DmTensor<bf16, Chip, Cluster, Slice, m![A]> = lhs.to_dm(&mut ctx.tdma, 0);

let rhs: DmTensor<bf16, Chip, Cluster, Slice, m![A]> = rhs.to_dm(&mut ctx.tdma, 1 << 12);

// Sub context: load rhs into TRF (TrfAddress::Full dedicates the entire TRF to this tensor)

let rhs: TrfTensor<bf16, Chip, Cluster, Slice, Row, m![A]> = ctx

.sub

.begin(rhs.view())

.fetch::<bf16, Time, m![A]>()

.collect::<m![{Time}, A / 16], m![A % 16]>()

.to_trf(TrfAddress::Full);

// Main context: stream lhs through the Contraction Engine, reduce along A

let result: DmTensor<bf16, Chip, Cluster, Slice, m![1 # 8]> = ctx

.main

.begin(lhs.view())

.fetch::<bf16, Time, m![A]>()

.collect::<m![A / 16], m![A % 16]>()

// Pair consecutive 32-byte flits into 64-byte packets, halving time steps (A/16 → A/32)

.align::<m![A / 32], m![A % 32], _, _>(&rhs)

.contract::<m![1]>()

.accumulate::<m![1], m![1 # 8]>(AccumulationKind::Interleaved)

.cast::<bf16, m![1 # 16]>() // cast f32 accumulator output back to bf16

.commit::<m![1 # 8]>(1 << 13);

// DM → HBM

result.to_hbm(&mut ctx.tdma, 2 << 28)

}

The dot product reduces along a single axis with no redistribution needed, so the Switch Engine is skipped and collect() is called directly on the FetchTensor.

The pseudocode below describes this behavior:

#![feature(adt_const_params)]

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 2048];

// Dot product: both operands reduce along A; no slice redistribution needed.
fn collect_dot_product<'l, const T: Tu>(
    input: FetchTensor<'l, T, bf16, m![1], m![1], m![1 # 256], m![1], m![A]>,
) -> CollectTensor<'l, T, bf16, m![1], m![1], m![1 # 256], m![A / 16], m![A % 16]> {
    input.collect()
}

GEMV

GEMV \(IJ, J \rightarrow I\) extends the dot product by requiring the Switch Engine (which redistributes data across slices between Fetch and Collect) to broadcast the vector across all I slices, so each slice can independently compute its row of the output \(y_i = \sum_j A_{ij} x_j\).

The reduced dimension J splits into Time (one iteration per tile) and Packet (elements within each tile).

The preserved output dimension I maps to Slice, distributing output elements across slices for spatial parallelism.
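The resulting loop structure can be written as a host-side reference. It is a sketch only: `f32` stands in for bf16, the matrix is assumed row-major (I major, J minor), and `gemv_sliced` is a hypothetical name. The outer `map` over `slice` models work that the hardware performs in parallel, one output element per slice.

```rust
const I: usize = 256; // output axis: one element per slice
const J: usize = 2048; // reduced axis
const PACKET: usize = 32; // J % 32: elements per packet
const TIME: usize = J / PACKET; // J / 32: 64 iterations per slice

fn gemv_sliced(a: &[f32], x: &[f32]) -> Vec<f32> {
    assert_eq!(a.len(), I * J);
    assert_eq!(x.len(), J);
    (0..I)
        .map(|slice| {
            let mut acc = 0.0f32;
            for t in 0..TIME {
                for p in 0..PACKET {
                    let j = t * PACKET + p; // recombine Time and Packet into J
                    acc += a[slice * J + j] * x[j]; // x is the same for every slice
                }
            }
            acc
        })
        .collect()
}

fn main() {
    // A selects x[i] for row i, so the result should equal the first I entries of x.
    let mut a = vec![0.0f32; I * J];
    for i in 0..I {
        a[i * J + i] = 1.0;
    }
    let x: Vec<f32> = (0..J).map(|j| j as f32).collect();
    let y = gemv_sliced(&a, &x);
    println!("y[5] = {}", y[5]);
}
```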

GEMV requires broadcasting the vector to all I slices, which the Switch Engine handles with Broadcast01.

The pseudocode below describes this behavior:

#![feature(adt_const_params)]

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![I = 256, J = 2048];

// GEMV: broadcast the vector across all I slices.
fn switch_gemv<'l, const T: Tu>(
    input: FetchTensor<'l, T, bf16, m![1], m![1], m![1 # 256], m![1], m![J]>,
) -> SwitchTensor<'l, T, bf16, m![1], m![1], m![I], m![1 # 256], m![J]> {
    input.switch(SwitchConfig::Broadcast01 {
        slice1: 256,
        slice0: 1,
        time0: 1,
    })
}

The complete GEMV program:

#![allow(unused)]

#![feature(register_tool)]
#![register_tool(tcp)]

extern crate furiosa_visa_std;
extern crate rand;
extern crate tokio;

use rand::SeedableRng;
use rand::rngs::SmallRng;
use furiosa_visa_std::prelude::*;

axes![I = 256, J = 2048];

type Chip = m![1];
type Cluster = m![1 # 2];
type Slice = m![I]; // Distribute output dimension across slices
type Time = m![J / 32]; // Temporal iterations for reduction dimension
type Packet = m![J % 32]; // Packet size for reduction dimension
type Row = m![1];

#[tokio::main]
async fn main() {
    let mut ctx = Context::acquire();

    // Create matrix and vector on host
    let mut rng = SmallRng::seed_from_u64(42);
    let matrix = HostTensor::<bf16, m![I, J]>::rand(&mut rng);
    let vector = HostTensor::<bf16, m![J]>::rand(&mut rng);

    // Transfer to HBM
    let matrix_hbm = matrix.to_hbm(&mut ctx.pdma, 0 << 28).await;
    let vector_hbm = vector.to_hbm(&mut ctx.pdma, 1 << 28).await;

    // Launch kernel
    let out_hbm = launch(kernel, (&mut ctx, &matrix_hbm, &vector_hbm)).await;

    // Transfer result back
    let out = out_hbm.to_host::<m![I]>(&mut ctx.pdma).await;
}

#[device(chip = 1)]
fn kernel(
    ctx: &mut Context,
    matrix: &HbmTensor<bf16, Chip, m![I, J]>,
    vector: &HbmTensor<bf16, Chip, m![J]>,
) -> HbmTensor<bf16, Chip, m![I]> {
    // Move data from HBM to DM
    let matrix: DmTensor<bf16, Chip, Cluster, Slice, m![J]> = matrix.to_dm(&mut ctx.tdma, 0);
    let vector: DmTensor<bf16, Chip, Cluster, Slice, m![J]> = vector.to_dm(&mut ctx.tdma, 1 << 12);

    // Load vector into TRF
    // The Switch Engine automatically broadcasts the vector to all `I` slices
    let vector_trf: TrfTensor<bf16, Chip, Cluster, Slice, Row, m![J]> = ctx
        .sub
        .begin(vector.view())
        .fetch::<bf16, m![1], m![J]>()
        // Collect Engine: split into 32-byte flits.
        .collect::<m![J / 16], m![J % 16]>()
        .to_trf(TrfAddress::Full);

    // Compute GEMV: matrix × vector
    // Key difference: `I` maps to slice (preserved), `J` gets reduced
    let result: DmTensor<bf16, Chip, Cluster, Slice, m![1]> = ctx
        .main
        .begin(matrix.view())
        .fetch::<bf16, Time, Packet>()
        .collect::<Time, Packet>()
        .align::<Time, Packet, _, _>(&vector_trf)
        .contract::<m![1]>()
        .accumulate::<m![1], m![1 # 8]>(AccumulationKind::Interleaved)
        .cast::<bf16, m![1 # 16]>()
        .commit(0);

    // Transfer result to HBM
    result.to_hbm(&mut ctx.tdma, 2 << 28)
}

GEMM

GEMM \(IK, KJ \rightarrow IJ\) computes \(C_{ij} = \sum_k A_{ik} B_{kj}\). Each matrix broadcasts along its missing output dimension: \(A\) broadcasts across \(J\), \(B\) broadcasts across \(I\). Then the shared dimension \(K\) is reduced.

The main change from the GEMV example is that two output dimensions \(I\) and \(J\) are jointly mapped to Slice, so each slice computes a 2D tile of the output matrix.

The slice mapping now covers both dimensions, and the contraction output preserves both.

This example introduces type Slice = m![I / 32, J / 32], which decomposes the two output dimensions jointly: with I = J = 512, the 256 slices form a 16 × 16 grid, and each slice owns a 32 × 32 output tile.

The Switch Engine distributes each tile of B to the matching slice, so each slice sees only its portion of J.

.contract::<m![1]>() reduces along K spatially, and .accumulate::<m![I % 32, J / 8 % 4], m![J % 8]>(AccumulationKind::Interleaved) accumulates over time, preserving both I and J in the output.
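Working through the index arithmetic for `m![I / 32, J / 32]` with I = J = 512 shows how output elements spread over the slices: I / 32 and J / 32 each take 16 values (256 slices in total), and the remainders I % 32 and J % 32 locate an element inside its slice's tile. The `place` function below is a hypothetical host-side illustration that assumes I / 32 is the major slice index, following the left-to-right order of the mapping.

```rust
const I: usize = 512;
const J: usize = 512;
const TILE: usize = 32;

/// (slice id, (row, column) within the slice's tile) for output element (i, j).
fn place(i: usize, j: usize) -> (usize, (usize, usize)) {
    assert!(i < I && j < J);
    let slice = (i / TILE) * (J / TILE) + (j / TILE); // I / 32 major, J / 32 minor
    (slice, (i % TILE, j % TILE))
}

fn main() {
    // Count how many output elements each slice owns.
    let mut per_slice = vec![0usize; (I / TILE) * (J / TILE)];
    for i in 0..I {
        for j in 0..J {
            per_slice[place(i, j).0] += 1;
        }
    }
    println!("{} slices, {} elements each", per_slice.len(), per_slice[0]);
}
```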

#![feature(register_tool)]
#![register_tool(tcp)]

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![I = 512, J = 512, K = 2048];

type Chip = m![1];
type Cluster = m![1 # 2];

// Distribute output dimensions `I` and `J` across slices
type Slice = m![I / 32, J / 32]; // 16 × 16 grid of slices; each slice handles a 32 × 32 output tile
type Row = m![J % 8];

// Host code similar to previous examples:
// - Create matrix tensors A and B
// - Transfer to HBM
// - Launch kernel
// - Transfer result back

#[device(chip = 1)]
fn kernel(
    ctx: &mut Context,
    a: &HbmTensor<bf16, Chip, m![I, K]>,
    b: &HbmTensor<bf16, Chip, m![K, J]>,
) -> HbmTensor<bf16, Chip, m![I, J]> {
    // Move data from HBM to DM
    let a: DmTensor<bf16, Chip, Cluster, Slice, m![I % 32, K]> = a.to_dm(&mut ctx.tdma, 0);
    let b: DmTensor<bf16, Chip, Cluster, Slice, m![J % 32, K]> = b.to_dm(&mut ctx.tdma, 1 << 12);

    // Load matrix B into TRF
    // Switch Engine distributes B across 256 slices
    // Each slice gets the full `K` dimension but only its (32 × 32) output tile
    // See: Switch Engine topologies for details on distribution
    let b_trf: TrfTensor<bf16, Chip, Cluster, Slice, Row, m![J / 8 % 4, K]> = ctx
        .sub
        .begin(b.view())
        .fetch::<bf16, m![J % 8, J / 8 % 4], m![K]>()
        .collect::<m![J % 8, J / 8 % 4, K / 16], m![K % 16]>()
        .to_trf(TrfAddress::Full);

    // Compute GEMM: A × B
    // Switch Engine ensures matching (`I / 32`, `J / 32`) slice distribution
    // Contraction reduces along `K`, preserves `I` and `J`
    let result: DmTensor<bf16, Chip, Cluster, Slice, m![I % 32, J % 32]> = ctx
        .main
        .begin(a.view())
        .fetch::<bf16, m![I % 32, J / 8 % 4], m![K]>()
        .collect::<m![I % 32, J / 8 % 4, K / 16], m![K % 16]>()
        .align::<m![I % 32, J / 8 % 4, K / 32], m![K % 32], _, _>(&b_trf)
        .contract::<m![1]>()
        .accumulate::<m![I % 32, J / 8 % 4], m![J % 8]>(AccumulationKind::Interleaved)
        .cast::<bf16, m![J % 8 # 16]>()
        .commit(0);

    // Transfer result to HBM
    result.to_hbm(&mut ctx.tdma, 2 << 28)
}

Blocked GEMM

Note

This section is a work in progress. A complete example extending GEMM with blocking (tiling) for matrices that exceed on-chip DM capacity will be added in a future release. It will cover temporal partitioning over the K dimension and spatial partitioning that distributes I and J tiles across multiple chips.

Flash Attention

Note

This section is a work in progress. A complete flash attention example combining GEMM-style contraction, softmax (Vector Engine), and the multi-pass main/sub prefetch pattern across a full transformer attention head will be added in a future release.

Together, the five complete examples above demonstrate every major hardware engine in TCP: the DMA engines (HBM to DM), the Vector Engine (element-wise ops), the Contraction Engine (multiply-reduce), and the Switch Engine (data redistribution across slices). (The Blocked GEMM and Flash Attention sections are stubs and will demonstrate additional patterns once complete.)

Further Reading

The examples above process tensors that fit in a single hardware pass. Real workloads often exceed the 512 KB/slice DM capacity and require partitioning into tiles. The next chapters cover two complementary strategies: temporal partitioning, which processes tiles sequentially over time, and spatial partitioning, which distributes tiles across parallel hardware units.

Each construct introduced in this chapter is covered in depth in the reference chapters:

- axes![], m![], HbmTensor, DmTensor → Mapping Tensors

- .to_dm(), .to_hbm(), .fetch(), .commit() → Moving Tensors

- .contract(), .accumulate(), .cast(), .switch(), .vector_fxp() → Computing Tensors

- ctx.main, ctx.sub, launch() → Scheduling

- End-to-end kernels combining all of the above → Kernel Examples

Mapping Tensors

This chapter explains what mappings are, how to declare them in TCP’s Virtual ISA, and how to choose them for performance.

Layout and Performance

Tensors have no intrinsic order of elements. A mapping is a function from tensor indices to buffer positions, which defines the order in which elements are stored. When storing a tensor in hardware, you need to decide how its elements will be mapped into the flat buffer.

The choice of mapping matters because hardware reads memory in contiguous blocks: elements stored far apart require more memory transfers. For example, one can choose which axis is major (outermost, changes slowest) and which is minor (innermost, changes fastest, stored contiguously). Changing a layout after allocation requires copying and transposing data, so the mapping chosen at allocation time constrains all subsequent operations to match that layout.

Consider a tensor with axes H (height, 6 rows) and W (width, 8 columns). The same tensor admits different mappings, each with different performance characteristics.

| H\W | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 |

|---|---|---|---|---|---|---|---|---|

| 0 | a | b | c | d | e | f | g | h |

| 1 | i | j | k | l | m | n | o | p |

| 2 | · | · | · | · | · | · | · | · |

| 3 | · | · | · | · | · | · | · | · |

| 4 | · | · | · | · | · | · | · | · |

| 5 | · | · | · | · | · | · | · | · |

H Major, W Minor

A scan along W is contiguous; a scan along H accesses one element per cache line.

| H=0 | H=0 | H=0 | H=0 | H=0 | H=0 | H=0 | H=0 | H=1 | H=1 | H=1 | H=1 | H=1 | H=1 | H=1 | H=1 | ... |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| a | b | c | d | e | f | g | h | i | j | k | l | m | n | o | p | ... |

W Major, H Minor

A scan along H is contiguous; a scan along W accesses one element per cache line.

| W=0 | W=0 | W=0 | W=0 | W=0 | W=0 | W=1 | W=1 | W=1 | W=1 | W=1 | W=1 | W=2 | W=2 | W=2 | W=2 | W=2 | W=2 | ... |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| a | i | · | · | · | · | b | j | · | · | · | · | c | k | · | · | · | · | ... |

Either choice sacrifices spatial locality (the property that nearby elements are stored at nearby addresses) in one direction. Tiling achieves good locality along both axes by grouping nearby H and W indices into 2D tiles.

2×2 Tiles

All elements within a tile are contiguous.

| t(0,0) | | | | t(0,1) | | | | t(0,2) | | | | ... |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| a | b | i | j | c | d | k | l | e | f | m | n | ... |

W-minor layout is fast along W but slow along H; H-minor layout is the reverse; tiling gives balanced locality in both directions at the cost of more complex address calculation.
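The three layouts above can be sketched as plain offset arithmetic. This is only an illustration using ordinary Rust integers, not the SDK's mapping types; the function names are hypothetical, and the sizes H = 6 and W = 8 come from the example table.

```rust
// Plain index arithmetic for the three layouts; no SDK types involved.
const H: usize = 6;
const W: usize = 8;

/// H major, W minor: each row is contiguous.
fn offset_h_major(h: usize, w: usize) -> usize {
    h * W + w
}

/// W major, H minor: each column is contiguous.
fn offset_w_major(h: usize, w: usize) -> usize {
    w * H + h
}

/// 2x2 tiles: tiles are laid out row by row, and the four
/// elements of a tile are contiguous.
fn offset_tiled_2x2(h: usize, w: usize) -> usize {
    let tile = (h / 2) * (W / 2) + (w / 2); // which tile
    let within = (h % 2) * 2 + (w % 2); // position inside the tile
    tile * 4 + within
}

#[test]
fn test_layout_offsets() {
    // Element `j` sits at (H = 1, W = 1) in the example table.
    assert_eq!(offset_h_major(1, 1), 9);
    assert_eq!(offset_w_major(1, 1), 7);
    assert_eq!(offset_tiled_2x2(1, 1), 3);
}
```

The tiled formula is the only one that needs both a division and a remainder per axis, which is the extra address-calculation cost mentioned above.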

The outer dimension of a decomposition can become a hardware time loop; the inner dimension, a parallel lane.

Choosing a decomposition is how programmers control which dimensions execute sequentially and which execute in parallel.

TCP names these hardware dimensions Time (the sequential loop counter) and Packet (the parallel data lane width), used throughout this book; Memory and Stream explains how decompositions map to them.

The Declarative Approach

Virtual ISA lets the programmer declare a mapping in terms of logical axes; the compiler derives physical placement, alignment, and hardware scheduling.

In the above example, the simplest form is m![H, W] for H-major and m![W, H] for W-major, where the leftmost axis is major and the rightmost is minor.

Decomposing axes further with / and % enables tiling, expressed as m![H / 2, W / 2, H % 2, W % 2]: the first two dimensions are the tile indices and the last two are positions within the tile.

Declarative mappings offer three benefits:

- Expressiveness: Layout is stated in terms of logical axes (m![H, W]), not raw memory strides or offsets.

- Correctness: The compiler normalizes mapping expressions to canonical form and verifies them symbolically, turning layout properties into compile-time invariants.

- Portability: The same expression targets CPUs, GPUs, and TCPs without rewrites; the compiler derives hardware-specific placement from the axis description.

Mapping expressions describe a tensor at every stage of its life, not only when it is at rest in memory. The same tensor can be stored in HBM, loaded into DM with a different layout, and streamed through the pipeline as packets; each stage holds the same mathematical values under a different mapping.

This unified view treats data movement as preserving the mathematical tensor: moving a tensor between stages changes only its physical representation, not its values. The Tensor Functions page formalizes this perspective and shows how it makes data movement composable with computation in the same pipeline.

Mapping Expressions

A mapping expression defines where each tensor element sits in a buffer. This page covers the available mapping constructors and the equivalences between mappings.

Consider a tensor with axes![A = 8, B = 512].

The mapping expression m![A, B] places \(A\) as the major axis and \(B\) as the minor axis, requiring a buffer of 8 × 512 = 4096 elements.

Buffer position 0 holds \(\{A=0, B=0\}\), position 1 holds \(\{A=0, B=1\}\), and so on through all 512 elements where \(A=0\) before moving to \(A=1\).

Axis Sizes

The axes! macro declares axis identifiers and their sizes.

Throughout this section, assume the following axis sizes.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

}

Mapping Interface

A mapping expression like m![H, W] is a Rust type that describes how tensor indices map to buffer positions.

Every mapping expression implements the M trait, which provides the buffer size and a buffer-index-to-tensor-index mapping function:

#![allow(unused)]

fn main() {

// Inside `furiosa_visa_std::prelude`...

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::fmt::Debug;

/// Tensor index: a map from axis identifiers to coordinate values.

pub struct Index { /* ... */ }

/// Constructs tensor indices.

/// `i![A: 2, B: 3]` creates an `Index` with A = 2 and B = 3.

macro_rules! i {

() => {};

/* ... */

}

}

A mapping defines what mathematical tensor a buffer represents.

For example, HostTensor<bf16, m![A, B]> denotes a host memory buffer containing m![A, B]::SIZE elements of bf16 data, which is 4096 elements.

We say a buffer holds a tensor \(T\) when:

- For every buffer index i and tensor index ti,

- if m![A, B]::map(i) = Some(ti),

- then the i-th element of the buffer stores the value of tensor \(T\) at index ti.
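The "holds" condition above can be sketched as an executable check. The code below is a toy model under stated assumptions: `map_ab` is a hand-written stand-in for `m![A, B]::map`, tensor indices are modeled as `(a, b)` pairs instead of the SDK's `Index` type, and `f32` stands in for `bf16`. None of these names come from `furiosa_visa_std`.

```rust
const A: usize = 8;
const B: usize = 512;

/// Toy stand-in for `m![A, B]::map`: buffer index -> tensor index (a, b).
fn map_ab(i: usize) -> Option<(usize, usize)> {
    if i < A * B { Some((i / B, i % B)) } else { None }
}

/// Returns true when `buffer` holds `tensor` under `map`.
/// Positions where `map` returns `None` (for example padding) are unconstrained.
fn holds(
    buffer: &[f32],
    map: impl Fn(usize) -> Option<(usize, usize)>,
    tensor: impl Fn(usize, usize) -> f32,
) -> bool {
    buffer.iter().enumerate().all(|(i, &v)| match map(i) {
        Some((a, b)) => v == tensor(a, b),
        None => true,
    })
}

#[test]
fn test_holds() {
    // An arbitrary mathematical tensor T(a, b).
    let tensor = |a: usize, b: usize| (a * 1000 + b) as f32;
    let mut buffer: Vec<f32> = (0..A * B)
        .map(|i| {
            let (a, b) = map_ab(i).unwrap();
            tensor(a, b)
        })
        .collect();
    assert!(holds(&buffer, map_ab, tensor));
    buffer[519] += 1.0; // corrupt the element at i![A: 1, B: 7]
    assert!(!holds(&buffer, map_ab, tensor));
}
```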

Constructors

Mapping expressions are built by composing small constructors, each of which transforms or combines simpler mappings.

These expressions use arithmetic-like operators (/, %, and # for padding) to concisely define the mapping between tensor and linear buffer indices.

Symbol

A symbol is a single uppercase letter whose size comes from the shape declaration.

The mapping m![A] maps 8 buffer indices linearly to tensor indices along the axis: buffer index 0 holds i![A: 0] (which equals the empty tensor index i![]), index 1 holds i![A: 1], index 2 holds i![A: 2], and so on:

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8];

type E = m![A]; // Symbol<Ident::A, 8>

#[test]

fn test_symbol() {

for i in 0..E::SIZE {

assert_eq!(E::map(i), Some(i![A: i]));

}

assert_eq!(E::map(E::SIZE), None);

}

}

// Trait implementation

impl<S: AxisName> M for Symbol<S> {

const SIZE: usize = S::SIZE;

fn to_value() -> Mapping {

Mapping::Symbol {

symbol: S::NAME,

size: S::SIZE,

}

}

fn map(i: usize) -> Option<Index> {

if i < S::SIZE {

let mut index = Index::new();

Index::add_term(

&mut index,

Term {

inner: Atom::Symbol {

symbol: S::NAME,

size: S::SIZE,

},

stride: 1,

modulo: S::SIZE,

},

i,

);

Some(index)

} else {

None

}

}

}

Note

For every symbol A, the 0th index i![A: 0] corresponds to the empty tensor index i![].

Pair

One way to store a 2D tensor with shape \(\{A=8, B=512\}\) is the pair mapping m![A, B].

This creates a buffer of 4096 elements where A is the major axis and B is the minor axis.

The first 512 elements hold A = 0 and the next 512 elements hold A = 1.

Buffer index 519 holds i![A: 1, B: 7] since 519 == 512 * 1 + 7.

The mapping Pair<L, R> maps the Cartesian product of two spaces into a linear buffer where L is the major dimension and R is the minor dimension.

The size is L::SIZE * R::SIZE, and the mapping uses floor division and modulo to decompose indices.

m![A, B, C, D] expands to Pair<A, Pair<B, Pair<C, D>>> and is right-associative.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

type E = m![A, B]; // Pair<m![A], m![B]>

#[test]

fn test_pair() {

for i in 0..E::SIZE {

assert_eq!(E::map(i), Some(i![A: i / <m![B]>::SIZE, B: i % <m![B]>::SIZE]));

}

assert_eq!(E::map(2 * <m![B]>::SIZE + 7), Some(i![A: 2, B: 7]));

assert_eq!(E::map(E::SIZE), None);

}

}

// Trait implementation

impl<L, R> M for Pair<L, R>

where

L: M,

R: M,

{

const SIZE: usize = L::SIZE * R::SIZE;

fn to_value() -> Mapping {

Mapping::Pair {

left: RBox::new(L::to_value()),

right: RBox::new(R::to_value()),

}

}

fn map(i: usize) -> Option<Index> {

let mut l = L::map(i / R::SIZE)?;

let r = R::map(i % R::SIZE)?;

Index::add(&mut l, r);

Some(l)

}

}

Identity

The identity mapping m![1] creates a single-element buffer that maps buffer index 0 to the empty tensor index i![].

It serves as the identity element for Pair: m![1, A] and m![A, 1] are both equivalent to m![A].

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

type E = m![1]; // Identity

#[test]

fn test_identity() {

assert_eq!(E::map(0), Some(i![]));

assert_eq!(E::map(1), None);

}

}

// Trait implementation

impl M for Identity {

const SIZE: usize = 1;

fn to_value() -> Mapping {

Mapping::Identity

}

fn map(i: usize) -> Option<Index> {

if i == 0 { Some(Index::new()) } else { None }

}

}

Padding

Padding aligns data to hardware requirements by adding unused buffer space.

For example, the DMA engine requires rows to start on 64-byte boundaries.

With axes![C = 13, D = 61], m![C, D] creates misaligned rows since the row length 61 is not a multiple of 64.

m![C, D # 64] fixes this by padding each row to a length of 64, which adds 3 unused elements per row so that every row starts at a multiple of 64.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![C = 13, D = 61];

type E = m![C, D # 64]; // Pair<m![C], Padding<m![D], 64>>

#[test]

fn test_padding() {

assert_eq!(E::map(0), Some(i![C: 0, D: 0]));

assert_eq!(E::map(60), Some(i![C: 0, D: 60]));

assert_eq!(E::map(61), None); // padding

assert_eq!(E::map(62), None); // padding

assert_eq!(E::map(63), None); // padding

assert_eq!(E::map(64), Some(i![C: 1, D: 0]));

}

}

// Trait implementation

impl<L, const SIZE: usize> M for Padding<L, SIZE>

where

L: M,

{

const SIZE: usize = SIZE;

fn to_value() -> Mapping {

Mapping::Padding {

inner: RBox::new(L::to_value()),

padding: SIZE,

kind: PaddingKind::Top,

}

}

fn map(i: usize) -> Option<Index> {

L::map(i)

}

}

Resize

Resize constrains a mapping to a smaller logical size by truncating indices beyond the new size, discarding elements outside that range. Unlike padding, which expands the buffer, Resize shrinks the logical view.

The mapping m![D = 2] takes only the first 2 elements of axis D, producing indices D = 0 and D = 1.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![C = 2, D = 3];

type E = m![C, D = 2]; // Pair<m![C], Resize<m![D], 2>>

#[test]

fn test_resize() {

assert_eq!(E::map(0), Some(i![C: 0, D: 0]));

assert_eq!(E::map(1), Some(i![C: 0, D: 1]));

assert_eq!(E::map(2), Some(i![C: 1, D: 0]));

assert_eq!(E::map(3), Some(i![C: 1, D: 1]));

assert_eq!(E::map(4), None);

}

}

// Trait implementation

impl<L, const SIZE: usize> M for Resize<L, SIZE>

where

L: M,

{

const SIZE: usize = SIZE;

fn to_value() -> Mapping {

Mapping::Resize {

inner: RBox::new(L::to_value()),

resize: SIZE,

}

}

fn map(i: usize) -> Option<Index> {

if i < SIZE { L::map(i) } else { None }

}

}

Tiling is implemented through indexed views, pure metadata transformations without data copies.

The .tile() method extracts a tile by resizing one dimension to the tile size and offsetting into the buffer.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

fn tiles() {

let tensor = unsafe { HbmTensor::<bf16, m![1], m![A, B]>::from_addr(0) };

let view = tensor.view(); // HbmTensorView::<'_, bf16, m![1], m![A, B]>

let tile01 = view.tile::<m![B], 2, m![A, B = 2 # 4]>(0); // HbmTensorView::<'_, bf16, m![1], m![A, B = 2 # 4]>

let tile23 = view.tile::<m![B], 2, m![A, B = 2 # 4]>(2); // HbmTensorView::<'_, bf16, m![1], m![A, B = 2 # 4]>

}

}

The .tile() method takes three type parameters and one value parameter.

- The tile dimension m![B] specifies which dimension to divide along.

- The tile size 2 specifies the number of elements per tile.

- The tile mapping m![A, B = 2 # 4] defines the resulting view’s mapping. The mapping B = 2 # 4 signifies that dimension B has a logical size of 2 within the view but exists within a physical footprint of 4. This is essential for preserving the original memory layout and stride calculations.

- The starting index specifies which tile to extract. Passing 0 captures the range 0..2 for tile01, while passing 2 captures the range 2..4 for tile23.

Stride and Modulo

Stride (/) and modulo (%) decompose a single dimension into two: the outer (block index) and the inner (position within block).

Consider the 512-element axis B divided into 8 blocks of 64 elements each.

The mapping m![B / 64, B % 64] creates an 8 × 64 grid where the first dimension selects which block and the second dimension selects the position within that block.

Buffer index 130 corresponds to block 2 at position 2 within that block, giving tensor index B = 64 × 2 + 2 = 130, equal to the flat-buffer result (since m![B / 64, B % 64] is equivalent to m![B]):

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

type D1 = m![B / 64]; // stride with size 8

type D2 = m![B % 64]; // modulo with size 64

type E = m![B / 64, B % 64]; // equivalent to `m![B]`

#[test]

fn test_stride_modulo() {

for i in 0..8 {

assert_eq!(D1::map(i), Some(i![B / 64: i]));

}

assert_eq!(D1::map(8), None);

for j in 0..64 {

assert_eq!(D2::map(j), Some(i![B % 64: j]));

}

assert_eq!(D2::map(64), None);

for i in 0..8 {

for j in 0..64 {

assert_eq!(

E::map(64 * i + j), // i![B / 64: i, B % 64: j]

<m![B]>::map(64 * i + j), // equivalent to above

);

}

}

assert_eq!(E::map(512), None);

}

}

// Trait implementation

impl<L, const SIZE: usize> M for Stride<L, SIZE>

where

L: M,

{

const SIZE: usize = {

assert!(L::SIZE % SIZE == 0, "Stride size must divide the original size");

L::SIZE / SIZE

};

fn to_value() -> Mapping {

Mapping::Stride {

inner: RBox::new(L::to_value()),

stride: SIZE,

}

}

fn map(i: usize) -> Option<Index> {

if i < Self::SIZE { L::map(i * SIZE) } else { None }

}

}

impl<L, const SIZE: usize> M for Modulo<L, SIZE>

where

L: M,

{

const SIZE: usize = {

assert!(L::SIZE % SIZE == 0, "Modulo size must divide the original size");

SIZE

};

fn to_value() -> Mapping {

Mapping::Modulo {

inner: RBox::new(L::to_value()),

modulo: SIZE,

}

}

fn map(i: usize) -> Option<Index> {

if i < Self::SIZE { L::map(i % L::SIZE) } else { None }

}

}

Together, m![B / 64, B % 64] transforms axis B into an 8 × 64 grid.

The mapping is equivalent to m![B] but expresses a different logical view of the same data, revealing block structure hidden in the flat representation.

Stride and modulo mappings can be visualized in tabular form. Consider the mapping m![B / 4, B % 4] with B::SIZE = 16. The following table shows how buffer indices are arranged: each row corresponds to a specific index of B / 4 (the stride axis), and each column corresponds to an index of B % 4 (the modulo axis):

| | i![B % 4: 0] | i![B % 4: 1] | i![B % 4: 2] | i![B % 4: 3] |

|---|---|---|---|---|

| i![B / 4: 0] | i![B: 0] | i![B: 1] | i![B: 2] | i![B: 3] |

| i![B / 4: 1] | i![B: 4] | i![B: 5] | i![B: 6] | i![B: 7] |

| i![B / 4: 2] | i![B: 8] | i![B: 9] | i![B: 10] | i![B: 11] |

| i![B / 4: 3] | i![B: 12] | i![B: 13] | i![B: 14] | i![B: 15] |

Stride and modulo factorize a single mapping into multiple dimensions.

The expression m![B / n] creates an outer dimension indexing blocks of size n.

The expression m![B % n] creates an inner dimension indexing positions within each block.

Modulo differs from resize in how it handles buffer size:

- Resize shrinks the buffer by truncating indices beyond the new size.

- Modulo keeps every element: combined with the matching stride (as in m![B / n, B % n]), the buffer retains its original size and is partitioned into equal-sized blocks.

These operations can be nested for complex decompositions.

The following example splits B into three dimensions where the buffer’s bit layout differs from that of the tensor index.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

// B's bits: 6 - 8, 0 - 4, 5

// Values: 0 - 7, 0 - 31, 0 - 1

type E = m![B / 64, B % 32, B / 32 % 2];

#[test]

fn test_nested_stride() {

for i in 0..8 {

for j in 0..32 {

for k in 0..2 {

assert_eq!(

E::map(64 * i + 2 * j + k),

Some(i![B: 64 * i + j + 32 * k]),

);

}

}

}

assert_eq!(E::map(512), None);

}

}

The buffer index decomposes as 64 * i + 2 * j + k, where i selects the 64-element block, j selects the position within a 32-element sub-block, and k selects which of the block's two sub-blocks.

The tensor index B reconstructs as 64 * i + j + 32 * k, which rearranges the bit positions.

For example, buffer index 67 maps to B = 97:

- Buffer 67 = 64 * 1 + 2 * 1 + 1 gives i=1, j=1, k=1

- Tensor B = 64 * 1 + 1 + 32 * 1 = 97

- Verify: 97 / 64 = 1, 97 % 32 = 1, (97 / 32) % 2 = 1

This kind of bit rearrangement maps naturally to hardware memory layouts where address bits are reordered for bank interleaving or cache efficiency.

In binary, this rearranges bit positions: buffer 001_00001_1 becomes B = 001_1_00001.

The buffer groups bits as [8:6]_[5:1]_[0] while B groups them as [8:6]_[5]_[4:0].
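The bit regrouping described above can be written out with shifts and masks. The following is a minimal sketch in plain Rust that mirrors m![B / 64, B % 32, B / 32 % 2] for B = 512; `buffer_to_b` is a hypothetical helper, not an SDK function.

```rust
/// Buffer index -> tensor index B for `m![B / 64, B % 32, B / 32 % 2]`,
/// written with explicit bit operations (B = 512, so indices have 9 bits).
fn buffer_to_b(i: usize) -> Option<usize> {
    if i >= 512 {
        return None;
    }
    let block = (i >> 6) & 0b111; // buffer bits [8:6] -> B bits [8:6]
    let low = (i >> 1) & 0b11111; // buffer bits [5:1] -> B bits [4:0]
    let sub = i & 0b1; // buffer bit [0] -> B bit [5]
    Some((block << 6) | (sub << 5) | low)
}

#[test]
fn test_bit_rearrangement() {
    // 001_00001_1 -> 001_1_00001
    assert_eq!(buffer_to_b(67), Some(97));
    // The rearrangement is a permutation of 0..512: every B is hit.
    let mut seen = [false; 512];
    for i in 0..512 {
        seen[buffer_to_b(i).unwrap()] = true;
    }
    assert!(seen.iter().all(|&s| s));
}
```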

Tiling can operate on blocks rather than individual elements.

The following example tiles by block using m![B / 32] and creates overlapping tiles:

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

let tensor = unsafe { HbmTensor::<bf16, m![1], m![A, B]>::from_addr(0) };

for i in 0..15 {

let tile = tensor.view().tile::<m![B / 32], 2, m![A, B / 32 = 2 # 16, B % 32]>(i);

}

}

With B = 512, the dimension B / 32 has 16 blocks numbered 0-15.

Each tile takes 2 consecutive blocks starting at index i.

Tile 0 covers blocks {0, 1}, tile 1 covers blocks {1, 2}, and so on through tile 14 covering blocks {14, 15}.

These tiles overlap because consecutive tiles share one block: tiles 0 and 1 both include block 1.

The tile mapping B / 32 = 2 resizes the block dimension to 2 since each tile contains exactly 2 blocks.

When tiling with a single block, B / 32 = 1 simplifies to the identity m![1] since the dimension has only one value.
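To make the overlap concrete, the sketch below computes which range of B each block-level tile covers. It uses ordinary integers only; `tile_range` and the constants are illustrative names, and the bounds check is an assumption about what a valid starting block is, not documented `.tile()` behavior.

```rust
use std::ops::Range;

const BLOCK: usize = 32; // elements per block (`B % 32`)
const NUM_BLOCKS: usize = 16; // 512 / 32 blocks (`B / 32`)
const TILE_BLOCKS: usize = 2; // blocks per tile

/// Range of `B` indices covered by the tile that starts at block `start`,
/// or `None` if the tile would run past the last block.
fn tile_range(start: usize) -> Option<Range<usize>> {
    if start + TILE_BLOCKS > NUM_BLOCKS {
        return None;
    }
    Some(start * BLOCK..(start + TILE_BLOCKS) * BLOCK)
}

#[test]
fn test_overlapping_tiles() {
    assert_eq!(tile_range(0), Some(0..64)); // blocks {0, 1}
    assert_eq!(tile_range(1), Some(32..96)); // blocks {1, 2}
    assert_eq!(tile_range(14), Some(448..512)); // blocks {14, 15}
    assert_eq!(tile_range(15), None); // would need block 16
}
```

Tiles 0 and 1 share the range 32..64, which is exactly block 1.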

Escape

For complex mappings, define type aliases and reference them using { ... }.

With separate mappings L = m![A] and R = m![B], combining them as m![{ L }, { R }] produces the same result as m![A, B]:

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

type L = m![A];

type R = m![B];

type E = m![{ L }, { R }]; // equivalent to `m![A, B]`

}

This escape syntax breaks down complex mappings into named, reusable components.

Advanced Constructors

Skewed Axis

A skewed axis creates a diagonal access pattern across two dimensions.

Skewed axes introduce derived axis labels defined by arithmetic differences between existing axes; for example, B' = B - A defines a new axis B' whose coordinate at any point equals B minus A.

Algorithms that process data along diagonals use this pattern, such as certain wavefront computations.

The expression m![A, B' = 4] with B' = B - A creates a mapping where each row is shifted relative to the previous one.

The = operator specifies the logical size after skewing. The result wraps around using modular arithmetic.

For example, with axes![A = 4, B = 4] and B' = B - A:

| (A, B’) | (A, B) |

|---|---|

| (0, 0) | (0, 0) |

| (0, 1) | (0, 1) |

| (0, 2) | (0, 2) |

| (0, 3) | (0, 3) |

| (1, 0) | (1, 1) |

| (1, 1) | (1, 2) |

| (1, 2) | (1, 3) |

| (1, 3) | (1, 0) |

When A = 1 and B' = 3, the original B coordinate wraps to 0 via modular arithmetic since B = (B' + A) % 4 = (3 + 1) % 4 = 0.
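The table can be reproduced with two small helpers. This is a sketch of the modular arithmetic only, assuming A = 4 and B = 4 as in the example; `skew` and `unskew` are hypothetical names and do not model how the SDK represents skewed axes.

```rust
const SIZE: usize = 4; // A = 4, B = 4

/// Recovers the original coordinate B from (A, B'), where B' = B - A (mod SIZE).
fn unskew(a: usize, b_prime: usize) -> usize {
    (b_prime + a) % SIZE
}

/// Computes the skewed coordinate B' from (A, B).
fn skew(a: usize, b: usize) -> usize {
    // Add SIZE before subtracting so the unsigned subtraction cannot underflow.
    (b + SIZE - a % SIZE) % SIZE
}

#[test]
fn test_skew() {
    assert_eq!(unskew(1, 0), 1); // row (1, 0) of the table
    assert_eq!(unskew(1, 3), 0); // wraps around
    // Skewing and unskewing are inverses of each other.
    for a in 0..SIZE {
        for b in 0..SIZE {
            assert_eq!(unskew(a, skew(a, b)), b);
        }
    }
}
```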

Indirect Sequencing

TODO: Document indirect sequencing patterns for non-contiguous memory access. This advanced constructor provides index-based memory access where the sequence of buffer positions is determined by an indirection table rather than a mathematical formula.

Sliding (Linear Combination)

Note

Linear combination expressions $(e1:n1, ..., ed:nd) combine multiple dimensions with specified strides. Formal definition: size_S($(e1:n1, ..., ed:nd)) = 1 + sum_k((size_S(ek) - 1) * nk). The mapping S, $(e1:n1, ..., ed:nd) |- si ~ ti holds if there exist si1...sid, ti1...tid such that for all k: S, ek |- sik ~ tik, si = sum_k(sik * nk), and ti = sum_k(tik * nk).

Linear combinations can encode outer sum: e1 * e2 is equivalent to $(e1 : size_S(e2), e2 : 1). However, outer sum is preferred because it’s more resilient to axis reordering. Changing e1 * e2 to e2 * e1 doesn’t require manual stride updates.

Sliding operations access overlapping data blocks, essential for convolutional neural networks. Consider a buffer of 9 elements representing a tensor with shape \(\{N=5, F=3\}\) where each row is a 3-element slice that slides one element at a time. The tensor element at \((N, F)\) maps to buffer index \(N + 2F\):

$$ \begin{array}{c|ccc} & F=0 & F=1 & F=2 \\ \hline N=0 & 0 & 2 & 4 \\ N=1 & 1 & 3 & 5 \\ N=2 & 2 & 4 & 6 \\ N=3 & 3 & 5 & 7 \\ N=4 & 4 & 6 & 8 \\ \end{array} $$

Note

In this sliding pattern, a single space index can map to multiple tensor indices. For example, space index 4 maps to {4_N}, {2_N, 1_F}, and {2_F} simultaneously. This illustrates the non-one-to-one nature of (S, e).maps(si, ti).

This can be expressed using a linear combination expression where the N axis has stride 1 and the F axis has stride 2, yielding a total size of 1 + (5-1)*1 + (3-1)*2 = 9.
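The size formula and the index computation for this example can be checked numerically. The sketch below models a linear combination as a list of (size, stride) pairs; the type alias and function names are illustrative assumptions, not part of the mapping API.

```rust
/// One term of a linear combination: (dimension size, stride).
type Term = (usize, usize);

/// size = 1 + sum_k((size_k - 1) * stride_k); assumes every size is at least 1.
fn lincomb_size(terms: &[Term]) -> usize {
    1 + terms
        .iter()
        .map(|&(size, stride)| (size - 1) * stride)
        .sum::<usize>()
}

/// Buffer index of a tensor coordinate: sum_k(coord_k * stride_k).
fn lincomb_index(terms: &[Term], coords: &[usize]) -> usize {
    terms
        .iter()
        .zip(coords)
        .map(|(&(_, stride), &c)| c * stride)
        .sum()
}

#[test]
fn test_sliding() {
    let terms = [(5, 1), (3, 2)]; // N: size 5, stride 1; F: size 3, stride 2
    assert_eq!(lincomb_size(&terms), 9);
    // Three different (N, F) coordinates land on buffer index 4.
    assert_eq!(lincomb_index(&terms, &[4, 0]), 4);
    assert_eq!(lincomb_index(&terms, &[2, 1]), 4);
    assert_eq!(lincomb_index(&terms, &[0, 2]), 4);
}
```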

Equivalent Mapping

Mappings E1 and E2 are equivalent when:

- E1::SIZE == E2::SIZE

- For every i, E1::map(i) == E2::map(i)

The equivalence relation is reflexive, symmetric, and transitive. Examples:

- Identity of pairs: for every E, E is equivalent both to m![{ E }, 1] and m![1, { E }].

- Stride-modulo decomposition: for every E whose size E::SIZE is divisible by n, E and m![{ E } / n, { E } % n] are equivalent.

- Pair projection: for every A and B, m![[{ A }, { B }] / B::SIZE] is equivalent to m![A] and m![[{ A }, { B }] % B::SIZE] is equivalent to m![B].

- Associativity of pairs: for every E1, E2, E3, m![{ E1 }, { E2 }, { E3 }], m![[{ E1 }, { E2 }], { E3 }], and m![{ E1 }, [{ E2 }, { E3 }]] are equivalent.

- Idempotent operations: for every E, E is equivalent to m![{ E } / 1], to m![{ E } # E::SIZE], and to m![{ E } = E::SIZE].

- Modulo by 1: for every E, m![E % 1] is equivalent to the identity mapping m![1].
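The definition of equivalence can be exercised on a toy model. In the sketch below a mapping is just a size plus a function pointer, and the tensor index is a single coordinate along B; `ToyMapping`, `equivalent`, `flat`, `split`, and `swapped` are hypothetical names. The SDK's compiler verifies equivalence symbolically, whereas this sketch simply enumerates indices.

```rust
/// Toy mapping: a size plus a buffer-index -> tensor-index function.
/// The tensor index is modeled as one coordinate along axis B.
struct ToyMapping {
    size: usize,
    map: fn(usize) -> Option<usize>,
}

/// Sizes match and both maps agree on every in-range index,
/// plus one index past the end, where both should return `None`.
fn equivalent(e1: &ToyMapping, e2: &ToyMapping) -> bool {
    e1.size == e2.size && (0..=e1.size).all(|i| (e1.map)(i) == (e2.map)(i))
}

const B: usize = 512;

/// Models `m![B]`.
fn flat(i: usize) -> Option<usize> {
    if i < B { Some(i) } else { None }
}

/// Models `m![B / 64, B % 64]`: block index times 64 plus inner position.
fn split(i: usize) -> Option<usize> {
    let (block, pos) = (i / 64, i % 64);
    if block < B / 64 { Some(block * 64 + pos) } else { None }
}

/// Models `m![B % 64, B / 64]`: same axes in swapped order.
fn swapped(i: usize) -> Option<usize> {
    let (pos, block) = (i / 8, i % 8);
    if pos < 64 { Some(block * 64 + pos) } else { None }
}

#[test]
fn test_equivalence() {
    let e_flat = ToyMapping { size: B, map: flat };
    let e_split = ToyMapping { size: B, map: split };
    let e_swapped = ToyMapping { size: B, map: swapped };
    assert!(equivalent(&e_flat, &e_split)); // stride-modulo decomposition
    assert!(!equivalent(&e_flat, &e_swapped)); // order of dimensions matters
}
```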

Memory and Stream

The Mapping Expressions page covered host tensors, which use a single flat buffer.

Device tensors extend host tensors in two directions: storage adds spatial dimensions (chips, clusters, slices) to match the hardware hierarchy, while data flowing through the Tensor Unit pipeline takes a streaming form with Time and Packet dimensions.

Both arise from the same hardware distinction between static storage and pipeline flow.

HBM and SRAM

(See Formal Definition at the end of this page for the precise buffer-to-tensor correspondence.)

Device memory has multiple levels, each with its own geometry. Each level is represented as a separate type parameter, enabling spatial parallelism:

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::marker::PhantomData;

// Assumed throughout this page.

axes![A = 8, B = 512];

// HBM tensors

struct HbmTensor<D: Scalar, Chip: M, Element: M> {

/* ... */

_marker: PhantomData<(D, Chip, Element)>,

}

// SRAM tensors

// DM (Data Memory), TRF (Tensor Register File), and VRF (Vector Register File)

struct DmTensor<D: Scalar, Chip: M, Cluster: M, Slice: M, Element: M> {

/* ... */

_marker: PhantomData<(D, Chip, Cluster, Slice, Element)>,

}

struct TrfTensor<D: Scalar, Chip: M, Cluster: M, Slice: M, Row: M, Element: M> {

/* ... */

_marker: PhantomData<(D, Chip, Cluster, Slice, Row, Element)>,

}

struct VrfTensor<D: Scalar, Chip: M, Cluster: M, Slice: M, Element: M> {

/* ... */

_marker: PhantomData<(D, Chip, Cluster, Slice, Element)>,

}

}

HBM tensors distribute data across chips for spatial parallelism (processing different data elements simultaneously on different hardware units).

For example, HbmTensor<bf16, m![A], m![B]> distributes 8 × 512 = 4096 elements across 8 chips with 512 elements per chip.

The i-th chip’s j-th element stores tensor index i![A: i, B: j].

SRAM tensor types add Cluster and Slice dimensions for finer-grained parallelism.

TrfTensor additionally has a Row dimension that distributes weight data across the 8 MAC rows per slice.

See Contraction Engine for details.

These tensor types assume all units at each level share the same mapping. The type parameters directly mirror the device structure, avoiding complex address calculations that would arise from flattening multi-dimensional storage into linear indices.

Alignment Constraint

Alignment constraints apply to the Element dimension: the starting address must be a multiple of size_of::<D>().

This ensures natural alignment for maximum throughput.

The Chip, Cluster, and Slice dimensions have no additional alignment constraints.

Size Constraint

Each dimension must fit within hardware limits. Each chip has 256MB of SRAM: 2 clusters × 256 slices × 512KB per slice. An 8-chip system provides 2GB total SRAM capacity.

All device tensor types share the following spatial constraints:

| Unit | Count | Constraint | Padding Required |

|---|---|---|---|

| Chip | System-dependent | Chip::SIZE == NUM_CHIPS | m![1 # NUM_CHIPS] |

| Cluster | 2 / Chip | Cluster::SIZE == 2 | m![1 # 2] |

| Slice | 256 / Cluster | Slice::SIZE == 256 | m![X / N # 256] |

Note

These exact-match constraints are a current limitation: the runtime operates at chip granularity (#[device(chip = N)]), so partial chip or cluster usage is not yet supported. Use the # padding operator to fill unused positions. This may be relaxed in future releases.

The Element dimension varies by tensor type:

| Type | Unit | Constraint |

|---|---|---|

| DmTensor | 512KB / Slice | Element::SIZE * size_of::<D>() <= 512KB |

| TrfTensor | 8KB / Row | Row::SIZE <= 8, Element::SIZE * size_of::<D>() <= 8KB |

| VrfTensor | 8KB / Slice | Element::SIZE * size_of::<D>() <= 8KB |

When a kernel uses fewer clusters than the hardware provides, the Cluster dimension is padded.

For example, a single-cluster kernel uses type Cluster = m![1 # 2], meaning 1 logical cluster padded to the hardware’s 2 clusters per chip.

A DmTensor<D, ..., Element> at address addr occupies addr..(addr + Element::SIZE * size_of::<D>()).
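The capacity arithmetic and the DmTensor constraints above can be checked with a few helper functions. This is a sketch under stated assumptions: the helpers are hypothetical, they take the element count as a plain `usize` instead of reading `Element::SIZE` from a mapping type, and `u16` stands in for `bf16` because both are 2 bytes wide.

```rust
use std::mem::size_of;
use std::ops::Range;

const KB: usize = 1024;
const DM_BYTES_PER_SLICE: usize = 512 * KB;
const SLICES_PER_CLUSTER: usize = 256;
const CLUSTERS_PER_CHIP: usize = 2;

/// 2 clusters x 256 slices x 512KB = 256MB per chip.
const SRAM_BYTES_PER_CHIP: usize =
    CLUSTERS_PER_CHIP * SLICES_PER_CLUSTER * DM_BYTES_PER_SLICE;

/// DmTensor size constraint: Element::SIZE * size_of::<D>() <= 512KB.
fn fits_in_dm_slice<D>(element_size: usize) -> bool {
    element_size * size_of::<D>() <= DM_BYTES_PER_SLICE
}

/// Alignment constraint: the start address is a multiple of size_of::<D>().
fn is_aligned<D>(addr: usize) -> bool {
    addr % size_of::<D>() == 0
}

/// Byte range occupied in a slice by a tensor placed at `addr`.
fn dm_byte_range<D>(addr: usize, element_size: usize) -> Range<usize> {
    addr..addr + element_size * size_of::<D>()
}

#[test]
fn test_dm_constraints() {
    assert_eq!(SRAM_BYTES_PER_CHIP, 256 * KB * KB);
    assert!(fits_in_dm_slice::<u16>(256 * KB)); // exactly 512KB
    assert!(!fits_in_dm_slice::<u16>(256 * KB + 1));
    assert!(is_aligned::<u16>(1 << 16));
    assert_eq!(dm_byte_range::<u16>(1 << 16, 2), 65536..65540);
}
```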

Tensor Unit Stream

While tensor data is stored in DM in a compact, storage-optimized layout, the Tensor Unit receives tensor data as streams of elements delivered over time.

The Packet dimension determines how many elements are delivered to the Tensor Unit in a single cycle.

Fetch Sequencers read DM data chunks and deliver a portion each clock cycle.

The Time dimension models this sequence of data delivery.

Unlike spatial dimensions that are constrained by hardware capacity, Time has no hardware-imposed size limit; it grows with the amount of data to process.

#![allow(unused)]

fn main() {

#![feature(adt_const_params)]

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::marker::ConstParamTy;

use std::marker::PhantomData;

axes![N = 4, C = 64, H = 32, W = 32];

/// Pipeline stage.

/// `Vector` is intentionally absent: the Vector Engine uses a separate typestate

/// (`VectorBranchTensor` and friends) that tracks branch, ALU, and other Vector-specific state.

/// `Commit` is intentionally absent: once the Commit Engine writes results back to DM,

/// the data is at rest and the type becomes `DmTensor`, not `StreamTensor`.

#[derive(PartialEq, Eq, ConstParamTy)]

enum Position {

Begin, // After the start of the pipeline

Fetch, // After the Fetch Engine

Switch, // After the Switch Engine

Collect, // After the Collect Engine

Contraction, // After the Contraction Engine

Reduce, // After the Reduce Engine

Cast, // After the Cast Engine

Transpose, // After the Transpose Engine

}

struct StreamTensor<

'l, // Lifetime tied to the Tensor Unit context

const P: Position,

D: Scalar,

Chip: M,

Cluster: M,

Slice: M,

Time: M,

Packet: M,

> {

/* ... */

_marker: PhantomData<&'l (D, Chip, Cluster, Slice, Time, Packet)>,

}

type T<'l> = StreamTensor<

'l,

{ Position::Fetch }, // Fetch Engine's output

bf16,

m![1], // Chip: single chip

m![1], // Cluster: single cluster

m![C / 2], // Slice: distribute 64 channels across 32 slices

m![N, H, W], // Time: iterate over batch (N) and spatial (H, W) dimensions

m![C % 2], // Packet: 2 channels per cycle

>;

}

Type T streams a tensor with an aggregate shape of \(\{N=4, C=64, H=32, W=32\}\) across 32 slices (Slice::SIZE = m![C / 2]::SIZE = 32).

The Time dimension (m![N, H, W]) has size 4 * 32 * 32 = 4096, which means there are 4096 temporal iterations or cycles.

Each cycle, the Packet dimension m![C % 2] delivers 2 channels to each slice.

Since 32 slices operate in parallel, each cycle processes 32 * 2 = 64 channels total.
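The counts above follow from plain arithmetic on the axis sizes. The sketch below also spells out which tensor index each (slice, cycle, packet position) triple would carry under the mappings of type T; `stream_index` and the constants are illustrative and are not part of the `StreamTensor` API.

```rust
// Axis sizes from the example: N = 4, C = 64, H = 32, W = 32.
const N: usize = 4;
const C: usize = 64;
const H: usize = 32;
const W: usize = 32;

const SLICE_SIZE: usize = C / 2; // m![C / 2]
const TIME_SIZE: usize = N * H * W; // m![N, H, W]
const PACKET_SIZE: usize = 2; // m![C % 2]

/// Tensor index (n, c, h, w) delivered to `slice` at cycle `time`,
/// at position `packet` within the packet.
fn stream_index(
    slice: usize,
    time: usize,
    packet: usize,
) -> Option<(usize, usize, usize, usize)> {
    if slice >= SLICE_SIZE || time >= TIME_SIZE || packet >= PACKET_SIZE {
        return None;
    }
    let n = time / (H * W); // major time axis
    let h = (time / W) % H;
    let w = time % W; // minor time axis
    let c = slice * 2 + packet; // C = (C / 2) * 2 + (C % 2)
    Some((n, c, h, w))
}

#[test]
fn test_stream_shape() {
    assert_eq!(TIME_SIZE, 4096); // cycles
    assert_eq!(SLICE_SIZE * PACKET_SIZE, C); // channels per cycle
    assert_eq!(stream_index(0, 33, 0), Some((0, 0, 1, 1)));
    assert_eq!(stream_index(31, 4095, 1), Some((3, 63, 31, 31)));
}
```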

Formal Definition

The following formalizes the buffer-to-tensor correspondence described above for multi-dimensional storage.

For an HBM tensor holding tensor \(T\), the correspondence is:

- For every chip index i, element index j, and corresponding tensor indices ti, tj:

- if Chip::map(i) = Some(ti) and Element::map(j) = Some(tj),

- then the i-th chip’s j-th element stores the value of tensor \(T\) at index ti ∪ tj (the union of the two partial tensor indices).

The same principle extends to SRAM tensors: for a DmTensor, the correspondence additionally requires matching Cluster::map and Slice::map indices, with the tensor index being the union of all four partial indices.

TrfTensor further adds a Row index.

Stream tensors add Time and Packet dimensions: the Time dimension indexes which cycle delivers the data, and the Packet dimension indexes elements within a single cycle.

Tensor Functions

The preceding pages showed that the same tensor can live in different memory tiers with different mapping expressions. To reason about operations independently of physical layout, TCP models hardware operations as abstract functions on mathematical tensors, focusing on what data they produce rather than how it is physically arranged.

The function elementwise_add implements the mathematical operation \(f(T_1, T_2) = T_1 + T_2\):

- For every tensor \(T_1\) and \(T_2\),

- if lhs holds \(T_1\) and rhs holds \(T_2\),

- then the return value holds \(T_1 + T_2\).

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

fn elementwise_add(

lhs: &HbmTensor<bf16, m![A], m![B]>,

rhs: &HbmTensor<bf16, m![A], m![B]>,

) -> HbmTensor<bf16, m![A], m![B]> {

// ... computes elementwise add ...

todo!("elementwise add lhs and rhs")

}

}

The same reasoning applies to data movement: moving a tensor from one memory tier to another is also a tensor function, one that preserves the mathematical content while changing the physical representation.

The .to_dm() method implements the identity function on the mathematical tensor (not the physical representation), copying tensor \(T\) from HBM to on-chip Data Memory:

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

axes![A = 8, B = 512];

fn hbm_to_dm(

ctx: &mut Context,

hbm: &HbmTensor<bf16, m![A], m![B]>,

) -> DmTensor<bf16, m![A], m![1], m![B / 2], m![B % 2]> {

hbm.to_dm(&mut ctx.tdma, 1 << 16) // 64KB offset

}

}

Both the input HbmTensor and output DmTensor hold the same mathematical tensor \(T\), but in different memory tiers and with different mapping expressions.

This means correctness is defined at the tensor level: a function is correct if its output holds the right mathematical tensor, regardless of which mapping or memory tier is used.

Treating data movement as a function on tensors rather than as a copy of bytes makes it composable with compute operations in the same pipeline.
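The claim that both representations hold the same tensor can be illustrated with nested vectors. The following toy model assumes the HBM layout is indexed as `hbm[chip][element]` and the DM layout as `dm[chip][slice][element]` (the single cluster is omitted), with `u16` standing in for `bf16`. The functions are hypothetical and do not reflect how `.to_dm()` is implemented.

```rust
const A: usize = 8;
const B: usize = 512;

/// `HbmTensor<_, m![A], m![B]>`: hbm[chip][element] holds T(A = chip, B = element).
fn hbm_index(chip: usize, element: usize) -> (usize, usize) {
    (chip, element)
}

/// `DmTensor<_, m![A], m![1], m![B / 2], m![B % 2]>`:
/// dm[chip][slice][element] holds T(A = chip, B = 2 * slice + element).
fn dm_index(chip: usize, slice: usize, element: usize) -> (usize, usize) {
    (chip, 2 * slice + element)
}

/// Toy model of the move: regroups each chip's row into per-slice pairs.
/// Assumes every row has an even number of elements.
fn to_dm(hbm: &[Vec<u16>]) -> Vec<Vec<[u16; 2]>> {
    hbm.iter()
        .map(|row| row.chunks(2).map(|c| [c[0], c[1]]).collect::<Vec<[u16; 2]>>())
        .collect()
}

#[test]
fn test_same_tensor() {
    // An arbitrary mathematical tensor T(a, b).
    let t = |a: usize, b: usize| (a * B + b) as u16;
    let hbm: Vec<Vec<u16>> = (0..A)
        .map(|a| (0..B).map(|b| t(a, b)).collect())
        .collect();
    let dm = to_dm(&hbm);
    for chip in 0..A {
        for slice in 0..B / 2 {
            for e in 0..2 {
                let (a, b) = dm_index(chip, slice, e);
                assert_eq!(dm[chip][slice][e], t(a, b));
            }
        }
    }
    assert_eq!(hbm[3][7], { let (a, b) = hbm_index(3, 7); t(a, b) });
}
```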

Moving Tensors

This chapter explains the three engines that move tensor data between memory tiers (HBM, DM, SPM) and the Tensor Unit: the Fetch Engine (DM to pipeline), the Commit Engine (pipeline to DM), and the DMA Engine (HBM/SPM to DM). Their APIs are designed around what you control: packet sizes, which engine moves each tensor, and how axes map to hardware dimensions. The compiler translates these declarations into low-level hardware concerns such as memory bank scheduling, stride calculation, and access alignment.

Device memory has two primary levels: off-chip HBM (High Bandwidth Memory) for high-capacity storage, and on-chip SRAM for low-latency working memory. SRAM is subdivided into DM (Data Memory) (the primary working memory), SPM (Scratchpad Memory) (a smaller high-speed buffer within each DM), TRF (Tensor Register File), and VRF (Vector Register File). Tensors are stored in these tiers in storage-optimized layouts. This chapter covers HBM, DM, and SPM (the tiers accessed by the DMA, Fetch, and Commit engines); TRF and VRF are loaded through the Tensor Unit pipeline and are covered in Computing Tensors.

flowchart TB

HBM[(HBM)] <--> DMA[DMA]

SPM[(SPM)] <--> DMA[DMA]

DMA <--> DM[(DM)]

subgraph TU[Tensor Unit]

direction TB

FE[Fetch] --> DOT1[...] --> CT[Contraction] --> VE[Vector] --> DOT2[...] --> CM[Commit]

end

DM -->|stream| FE

CM -->|stream| DM

click DMA "./dma-engine.html" "DMA Engine"

click FE "./fetch-engine.html" "Fetch Engine"

click CT "../computing-tensors/contraction-engine/index.html" "Contraction Engine"

click VE "../computing-tensors/vector-engine/index.html" "Vector Engine"

click CM "./commit-engine.html" "Commit Engine"

click TU "../computing-tensors/index.html" "Tensor Unit"

The Fetch engine converts DM storage layout into packet streams for the Tensor Unit; the Commit engine performs the reverse; the DMA engine converts between HBM and DM layouts. All three engines rely on Sequencers, a configuration abstraction that controls memory access patterns through nested-loop configurations, generating and consuming fixed-size packets for deterministic per-cycle transfers and aligned bank access. Memory Performance provides guidance on achieving optimal throughput.

Sequencers read DM at the Fetch Engine and write DM at the Commit Engine, converting between storage layout and stream format. The DMA Engine is a separate pipeline that moves data between HBM/SPM and DM independently of the Tensor Unit.

Sequencer

The Fetch and Commit Engines use sequencers to address DM; this page explains how sequencers work, including their configuration constraints and the failure cases that arise when those constraints are exceeded.

Sequencers convert between tensors in memory and packet streams: reading converts a memory buffer into a stream of packets, and writing performs the reverse.

A packet is a fixed-size chunk of data; one packet is delivered per clock cycle, and its size is set by the Packet mapping dimension.

The DMA Engine chains a read and a write sequencer to move data between HBM/SPM and DM without intermediate buffers.

As a kernel writer, you control the Time and Packet type parameters, which determine iteration count and packet size; the compiler derives the register configuration and strides.

For performance implications of Packet choices, see Memory Performance.
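To make the roles of Time and Packet concrete, the following is a minimal sketch in plain Rust, not the Virtual ISA API. It assumes a row-major buffer and uses hypothetical helpers `read_packets` and `write_packets` to mimic a read with Time = [A, B / packet] and Packet = [B % packet], followed by a write that restores the buffer.

```rust
/// Reads a row-major `a x b` buffer as a stream of fixed-size packets.
/// Mirrors Time = [A, B / packet], Packet = [B % packet] in plain Rust.
fn read_packets(buf: &[i32], a: usize, b: usize, packet: usize) -> Vec<Vec<i32>> {
    assert_eq!(buf.len(), a * b);
    assert_eq!(b % packet, 0, "packet size must divide the inner axis");
    let mut stream = Vec::with_capacity(a * (b / packet));
    for row in 0..a {
        for chunk in 0..b / packet {
            // One time step: `packet` consecutive elements.
            let start = row * b + chunk * packet;
            stream.push(buf[start..start + packet].to_vec());
        }
    }
    stream
}

/// Writes the packets back in the same order, restoring the buffer.
fn write_packets(stream: &[Vec<i32>]) -> Vec<i32> {
    stream.iter().flatten().copied().collect()
}

fn main() {
    let buf: Vec<i32> = (0..8 * 512).collect();
    let stream = read_packets(&buf, 8, 512, 32);
    println!("{} packets of {} elements", stream.len(), stream[0].len());
    assert_eq!(write_packets(&stream), buf); // read then write preserves values
}
```

For an 8×512 buffer and 32-element packets this yields 8 * 16 = 128 time steps, and writing the stream back reproduces the original buffer.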

Interface

To explain sequencer concepts, we use simplified types that capture the essential structure.

The actual API is introduced in later sections.

The read and write methods preserve tensor values while transforming between memory layout and stream format.

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::marker::PhantomData;

/// A tensor in a linear buffer with mapping `Buf`.

struct BufTensor<D: Scalar, Buf: M> {

/* ... */

_marker: PhantomData<(D, Buf)>,

}

/// A tensor in motion.

/// - `'l`: Lifetime tied to the source buffer, ensuring the stream cannot outlive its data.

/// - `Time`: Temporal mapping (iteration over time).

/// - `Packet`: Spatial mapping (contents of a single packet).

struct StreamTensor<'l, D: Scalar, Time: M, Packet: M> {

/* ... */

_marker: PhantomData<&'l (D, Time, Packet)>,

}

impl<D: Scalar, Buf: M> BufTensor<D, Buf> {

/// Reads a tensor from a linear buffer into a stream.

fn read<'l, Time: M, Packet: M>(&'l self) -> StreamTensor<'l, D, Time, Packet> {

// hardware implementation

unimplemented!()

}

/// Writes a stream of packets back into a linear buffer.

fn write<'l, Time: M, Packet: M>(&mut self, stream: StreamTensor<'l, D, Time, Packet>) {

// hardware implementation

unimplemented!()

}

}

}

Examples

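The examples below express common access patterns (strided access, axis reordering, tiling, and broadcasting) through the Time and Packet mappings. The Configuration section below explains how the compiler derives sequencer configurations from these mappings.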

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::marker::PhantomData;

struct BufTensor<D: Scalar, Buf: M>(PhantomData<(D, Buf)>);

struct StreamTensor<'l, D: Scalar, Time: M, Packet: M>(PhantomData<&'l (D, Time, Packet)>);

impl<D: Scalar, Buf: M> BufTensor<D, Buf> {

fn read<'l, Time: M, Packet: M>(&'l self) -> StreamTensor<'l, D, Time, Packet> { unimplemented!() }

fn write<'l, Time: M, Packet: M>(&mut self, stream: StreamTensor<'l, D, Time, Packet>) { let _ = stream; }

}

axes![A = 8, B = 512, N = 4, C = 3, H = 8, W = 8, T = 4, P = 4];

/// Strided access: read 8×512 tensor as 128 packets of 32 elements.

/// Time = m![A, B / 32] produces 8 * 16 = 128 time steps.

/// Packet = m![B % 32] delivers 32 consecutive elements per packet.

fn strided_read<'l>(

buf: &'l BufTensor<bf16, m![A, B]>,

) -> StreamTensor<'l, bf16, m![A, B / 32], m![B % 32]> {

buf.read() // Time and Packet are inferred from the return type

}

/// Strided write: write 128 packets of 32 elements back to 8×512 tensor.

fn strided_write(

buf: &mut BufTensor<bf16, m![A, B]>,

stream: StreamTensor<bf16, m![A, B / 32], m![B % 32]>,

) {

buf.write(stream)

}

/// Axis reordering read: change traversal from [N, C, H, W] to [W, H, C, N].

/// Time = m![W, H, C, N] iterates in reversed axis order.

/// Packet = m![1] delivers single-element packets.

fn axis_reordering_read<'l>(

buf: &'l BufTensor<bf16, m![N, C, H, W]>,

) -> StreamTensor<'l, bf16, m![W, H, C, N], m![1]> {

buf.read()

}

/// Axis reordering write: write [W, H, C, N] stream back to [N, C, H, W] buffer.

fn axis_reordering_write(

buf: &mut BufTensor<bf16, m![N, C, H, W]>,

stream: StreamTensor<bf16, m![W, H, C, N], m![1]>,

) {

buf.write(stream)

}

/// Tiling read: break axes into sub-blocks for cache efficiency.

/// Time = m![A % 2, B % 4, A / 2, B / 4] tiles A into 2 × 4, B into 4 × 128 blocks.

/// Packet = m![C # 32] pads C to 32 elements per packet.

fn tiling_read<'l>(

buf: &'l BufTensor<i8, m![A, B, C # 8]>,

) -> StreamTensor<'l, i8, m![A % 2, B % 4, A / 2, B / 4], m![C # 32]> {

buf.read()

}

/// Tiling write: write tiled stream back to buffer.

fn tiling_write(

buf: &mut BufTensor<i8, m![A, B, C # 8]>,

stream: StreamTensor<i8, m![A % 2, B % 4, A / 2, B / 4], m![C # 32]>,

) {

buf.write(stream)

}

/// Broadcasting read: replicate elements using stride 0.

/// Time = m![T, A] broadcasts T temporally (same data repeated T times).

/// Packet = m![P] broadcasts P spatially (same element fills packet).

fn broadcasting_read<'l>(

buf: &'l BufTensor<i8, m![A]>,

) -> StreamTensor<'l, i8, m![T, A], m![P]> {

buf.read()

}

/// Broadcasting write: write broadcast stream back to buffer.

fn broadcasting_write(

buf: &mut BufTensor<i8, m![A]>,

stream: StreamTensor<i8, m![T, A], m![P]>,

) {

buf.write(stream)

}

}

Configuration

The compiler translates input and output tensor mappings into nested-loop configurations that the sequencer hardware executes.
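For a pure axis permutation such as the axis-reordering example above, this translation can be pictured as looking up each stream axis's stride in the buffer layout. The sketch below is an illustration only, not the compiler's actual algorithm: it assumes a row-major buffer, represents axes by plain indices, and uses hypothetical helpers `row_major_strides` and `permuted_entries`.

```rust
/// Row-major strides (in elements) for a buffer with the given axis sizes,
/// listed outermost first.
fn row_major_strides(sizes: &[usize]) -> Vec<usize> {
    let mut strides = vec![1; sizes.len()];
    for i in (0..sizes.len().saturating_sub(1)).rev() {
        strides[i] = strides[i + 1] * sizes[i + 1];
    }
    strides
}

/// Builds (size, stride) loop entries for a stream that visits the buffer
/// axes in `order` (outermost loop first). `order[k]` indexes into `sizes`.
fn permuted_entries(sizes: &[usize], order: &[usize]) -> Vec<(usize, usize)> {
    let strides = row_major_strides(sizes);
    order.iter().map(|&axis| (sizes[axis], strides[axis])).collect()
}

fn main() {
    // Buffer [N, C, H, W] = [4, 3, 8, 8]; stream order [W, H, C, N].
    let entries = permuted_entries(&[4, 3, 8, 8], &[3, 2, 1, 0]);
    println!("{:?}", entries); // [(8, 1), (8, 8), (3, 64), (4, 192)]
}
```

Each pair is one loop level: the number of iterations and the distance in elements between consecutive iterations.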

Each configuration has the form [size_0 : stride_0, size_1 : stride_1, ...] : packet_size, where each entry’s subscript is its position in the loop nest (0 = outermost). The following Rust types represent this form:

#![allow(unused)]

fn main() {

struct Config {

/// Each entry defines a nested loop level.

entries: Vec<Entry>,

/// Number of elements per hardware fetch.

packet_size: usize,

}

struct Entry {

/// Number of iterations for this loop level.

size: usize,

/// Address increment (in elements) between consecutive iterations of this loop.

stride: isize,

}

}

Each entry encodes one dimension of tensor traversal.

The size field determines how many times this loop iterates, while the stride field determines the memory offset between consecutive iterations.

Together, entries form nested loops that traverse memory.
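The nested-loop reading of a configuration can be sketched as a small address generator. This is a software model for illustration, not the hardware implementation; the `addresses` function is hypothetical, and the `Entry` struct is redefined locally so the block stands alone.

```rust
/// One loop level: `size` iterations, advancing `stride` elements each time.
#[derive(Clone, Copy)]
struct Entry {
    size: usize,
    stride: isize,
}

/// Enumerates the start address of every packet in visiting order.
/// Entries are listed outermost first, so the last entry varies fastest.
fn addresses(entries: &[Entry]) -> Vec<isize> {
    let mut out = vec![0isize];
    for e in entries {
        let mut next = Vec::with_capacity(out.len() * e.size);
        for &base in &out {
            for i in 0..e.size {
                next.push(base + i as isize * e.stride);
            }
        }
        out = next;
    }
    out
}

fn main() {
    // [2 : 4, 3 : 1] walks a 2 x 3 window of a buffer whose row pitch is 4.
    let walk = addresses(&[Entry { size: 2, stride: 4 }, Entry { size: 3, stride: 1 }]);
    assert_eq!(walk, vec![0, 1, 2, 4, 5, 6]);

    // Stride 0 revisits the same addresses: a temporal broadcast.
    let bcast = addresses(&[Entry { size: 2, stride: 0 }, Entry { size: 3, stride: 1 }]);
    assert_eq!(bcast, vec![0, 1, 2, 0, 1, 2]);
}
```

The second call shows how a stride of 0 replays the inner loop without moving through memory, which is how the broadcasting example above replicates data.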

Example: [N, C, H, W] ↔ [W, H, C, N]

#![allow(unused)]

fn main() {

extern crate furiosa_visa_std;

use furiosa_visa_std::prelude::*;

use std::marker::PhantomData;

struct BufTensor<D: Scalar, Buf: M>(PhantomData<(D, Buf)>);

struct StreamTensor<'l, D: Scalar, Time: M, Packet: M>(PhantomData<&'l (D, Time, Packet)>);

impl<D: Scalar, Buf: M> BufTensor<D, Buf> {

fn read<'l, Time: M, Packet: M>(&'l self) -> StreamTensor<'l, D, Time, Packet> { unimplemented!() }

fn write<'l, Time: M, Packet: M>(&mut self, stream: StreamTensor<'l, D, Time, Packet>) { let _ = stream; }

}

struct Config {

entries: Vec<Entry>,

packet_size: usize,

}

struct Entry {

size: usize,

stride: isize,

}

axes![N = 4, C = 3, H = 8, W = 8];

fn read_nchw_whcn(buf: &BufTensor<bf16, m![N, C, H, W]>) ->

StreamTensor<bf16, m![W, H, C, N], m![1]> {

// Compiler-generated configuration: [8 : 1, 8 : 8, 3 : 64, 4 : 192] : 1

let config = Config {

entries: vec![

Entry { size: 8, stride: 1 }, // W

Entry { size: 8, stride: 8 }, // H

Entry { size: 3, stride: 64 }, // C

Entry { size: 4, stride: 192 }, // N

],

packet_size: 1,

};

// The hardware executes the configuration as nested loops:

for w in 0..8 {

for h in 0..8 {

for c in 0..3 {

for n in 0..4 {

// Read each address

let addr = 1 * w + 8 * h + 64 * c + 192 * n;

// yield buf[addr];

}

}

}

}

buf.read()

}